Why does this matter? The obvious answer is that, despite the dramatic decline in disk costs over the past couple of decades, disk is still not free. Beyond that, there are performance implications to keeping more data on hand than necessary. Database backup tasks and batch jobs that run against the whole database take longer. Response times for online queries may also become unacceptable as the queries sift through excessive obsolete data.

Furthermore, more data is not always better than less data. Very old data may mislead business planners and strategists because trends are discontinuous. New product introductions by the company or its competitors, changing consumer tastes, new business models, new technologies, and other factors alter customers' buying behaviors, internal inventory flows, and other operating characteristics. Inferring and extrapolating trends using information that spans too long a time may be not only a slow process because of the volume of data, but also a misleading one.

Data Manager tackles this problem by moving old data from the primary database to either a secondary database on disk or off to tape. It is, in effect, an archiving engine. You provide it with a model that defines your databases and your archiving policies. On an ongoing basis, it then automatically archives data according to those policies, without the need for operator intervention.

For example, you might tell Data Manager to move "old" data—data that is not needed on a regular basis, but which still may be required on short notice—from the primary database to a reference database on disk. You can also have it move "older" data from the reference database onto tape in order to reduce disk costs. Alternatively, you might set up just a single step from the primary database directly to tape. You define "old" and "older" in the model.

Comprehensive Archiving Policies

The "old" and "older" concepts are not as straightforward as they might seem. For example, you might want to archive all purchase orders more than three years old, but what does "three years old" mean? Standing purchase orders may be open for months or even years, and the various line items on one-time purchase orders may be shipped individually over a span of months. You don't want to archive an open purchase order, or one that was filled only two months ago and may still need to be referenced because the order or its payment is open to dispute. Data Manager allows you to establish detailed archiving policies that accommodate these issues. For example, you can have it archive purchase orders that were created more than three years ago, but only if they are completely closed and nothing has been received on them for, say, at least six months.
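To make the purchase-order rule concrete, here is a minimal Python sketch of that kind of compound policy. It is not Data Manager's model syntax, which this article does not show. The `PurchaseOrder` fields, the `is_archivable` function, and the day counts used for "three years" and "six months" are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class PurchaseOrder:
    # Hypothetical record layout, for illustration only.
    order_id: str
    created: date
    closed: bool
    last_receipt: Optional[date]  # None if nothing was ever received


def is_archivable(po: PurchaseOrder, today: date,
                  min_age_days: int = 3 * 365,
                  quiet_days: int = 182) -> bool:
    """Return True only if every condition of the policy holds."""
    # An open order is never archived, no matter how old it is.
    if not po.closed:
        return False
    # The order must have been created more than ~three years ago.
    if today - po.created <= timedelta(days=min_age_days):
        return False
    # Nothing may have been received on it for ~six months.
    if po.last_receipt is not None:
        if today - po.last_receipt < timedelta(days=quiet_days):
            return False
    return True
```

The point of the sketch is that age alone never qualifies a record: each extra condition narrows the set of candidates, which is why "three years old" needs a precise definition in the model.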

Data Manager also honors all referential integrity rules. Even if a record meets all of the other archiving policies, Data Manager will not archive it if dependent records exist that are not scheduled for archiving.
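The referential integrity rule can be pictured as a filter applied after the policy checks. The sketch below is an assumption about how such a filter might work, not a description of Data Manager's internals. The function name and the `dependents` mapping (record ID to the IDs of records that depend on it) are hypothetical.

```python
from typing import Dict, Iterable, List, Set


def enforce_referential_integrity(candidates: Iterable[str],
                                  dependents: Dict[str, List[str]]) -> Set[str]:
    """Drop any candidate that has a dependent not scheduled for archiving.

    Removing one record can block another that it depends on, so the
    check repeats until the scheduled set stops changing.
    """
    scheduled = set(candidates)
    changed = True
    while changed:
        changed = False
        for record in list(scheduled):
            children = dependents.get(record, ())
            if any(child not in scheduled for child in children):
                scheduled.discard(record)
                changed = True
    return scheduled
```

For instance, if purchase order PO2 has line items L2 and L3 but only L2 meets the archiving policy, PO2 stays in the primary database even though it passed every other test.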

To help ensure compliance with data security and management accountability regulations, Data Manager maintains a complete audit trail of all actions it performs. This audit log shows not only where Data Manager moved data, but also when and how the archiving criteria were changed over time.

ERP Database Archiving

ERP applications often provide purging and archiving features, but these features are usually not comprehensive and, worse, they normally require that the system be shut down while they run. In contrast, Data Manager provides comprehensive purging and archiving functionality, and its processes can run while systems are active. Many organizations find that this benefit alone delivers a return on their investment almost immediately.

Working with Data Manager is simple. First, you load the archiving model. Models have been built for most of the major ERP packages, including BPCS, MOVEX, System 21, World, and OneWorld, and a growing number can even be downloaded from Vision's Web site. These models can then be customized to accommodate your specific archiving policies and any tailoring that your organization performed on the ERP. Alternatively, models can be easily built from scratch.

Archive Modeling

This is not to suggest that Data Manager is only for environments running ERP software. Data Manager is entirely application-independent. Its archiving model is simply a means of defining files and links between files, archiving and purging policies that are to be applied to them, and identifying any unusual conditions that require exceptions to those policies. The model is completely independent and not directly aware of the applications that use the files that are included in it.

Archive Process Control functionality in Data Manager creates, in effect, development and test environments for the archiving model. You can build new models or modify existing ones in the development environment without affecting the purging and archiving activities going on in the production environment. Those activities continue to follow the policies in the existing, proven model. When you have finished creating or modifying a model and have thoroughly tested it, you can promote it to the production environment. Only then will the policies in the model under development replace those in production.

Hierarchical Archiving

Data Manager can implement either two-tier or three-tier archiving models. All IT people are familiar with the two-tiered model. Under it, old data is sent to tape and deleted from disk. The archived data can be retrieved from tape if necessary, but doing so is a very long and, assuming the tape cartridge is not mounted in the drive or automated tape library, labor-intensive process. Consequently, old data is often not archived until the IT staff is fairly certain that it will never, or at least exceptionally rarely, be called for again.

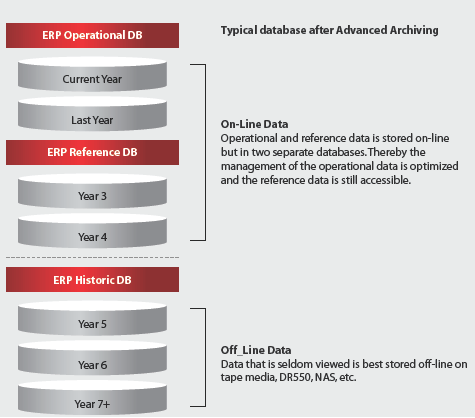

Figure 1: Here's an example of a three-tiered hierarchical archiving model.

A three-tier model includes the option of archiving data to disk. For example, data that will not be needed for operational purposes but is still required for reporting and analysis purposes is archived to a reference disk rather than to tape. Doing so gets the data off the operational database so it doesn't slow down production applications but keeps it quickly available when needed for other purposes.

Older data, typically data that is obsolete but must still be kept for legal purposes or "just in case," is moved off disk completely and onto tape, just as it would be in the two-tiered model.

The three-tier model does not require that data always follow this two-step process. Depending on the nature of the data, you might specify that some of it is to move from the primary database to the reference disk once it reaches a certain age and then later onto tape, while other data may move directly from the primary disk to tape.
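The choice between the two-step path and the direct path can be sketched as a per-data-type policy. This is an illustrative Python sketch under stated assumptions: the `TierPolicy` class, its field names, and the age thresholds are hypothetical and do not come from Data Manager.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TierPolicy:
    # Age at which data moves to the reference disk; None skips that tier.
    reference_after_days: Optional[int]
    # Age at which data moves to tape.
    tape_after_days: int


def target_tier(age_days: int, policy: TierPolicy) -> str:
    """Return the tier where data of the given age belongs."""
    if age_days >= policy.tape_after_days:
        return "tape"
    if (policy.reference_after_days is not None
            and age_days >= policy.reference_after_days):
        return "reference"
    return "primary"


# Two-step path: primary -> reference disk -> tape.
two_step = TierPolicy(reference_after_days=365, tape_after_days=7 * 365)
# Direct path: primary -> tape, with no stop on the reference disk.
direct = TierPolicy(reference_after_days=None, tape_after_days=3 * 365)
```

With these example values, 400-day-old data under `two_step` belongs on the reference disk, while the same data under `direct` stays in the primary database until it is old enough for tape.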

Copying Without Deleting

Data Manager allows you to copy data without deleting it from the primary database. This can be helpful when testing your model as you can defer the purging of production data until you are completely confident that the archiving rules are correct. Even then, there is no threat to your data as Data Manager allows you to easily restore archived data to the production database.

The ability to copy but not delete selected data provides an ancillary benefit. You can use this feature to easily create high-quality, up-to-date test databases with referential integrity fully intact. This allows developers to test new and updated applications under current real-world conditions.

Ease of Use and Administration

Vision Solutions has put considerable work into minimizing the administrative load required to install and run Data Manager. A simple, query-like interface allows you to model your purge and archive plan without the need for programming skills.

Data Manager is implemented in conjunction with Director, an integrated set of applications that proactively monitors, manages, and optimizes System i servers, databases, and application environments. By combining Data Manager with Director, you can free up more space by using the reorganization-while-active functionality, gain greater visibility into your System i, and keep your System i optimally tuned.

Data Manager and Director come with self-installing engines and, installed in this combination, they demonstrate their heritage in being built on the eight industry-standard pillars of autonomic computing as described below:

- Self-Aware—It manages itself with respect to resources, configurations, and parameters.

- Self-Configuring—It enables self-installation, setup, and configuration.

- Self-Optimizing—It monitors and manages itself, adjusting to the business needs and priorities.

- Self-Healing—It detects, anticipates, and can recover from routine and extraordinary events.

- Self-Protecting—It detects, identifies, and responds to anomalies in the system to maintain overall system performance and integrity.

- Self-Adapting—It is aware of itself and its environmental context and will manage its own resource allocation.

- Self-Managing—It monitors its system resource requirements and will regularly manage resource usage for optimal performance.

- Self-Anticipating: It understands its environment and forward resource requirements for various tasks, constantly "looking ahead" to ensure that tasks can be started and completed within defined windows of processing time or will reschedule as appropriate to optimize system use for the business.

For more information about Data Manager, contact Vision Solutions at one of the addresses below.

Vision Solutions, Inc.

17911 Von Karman Ave.

Irvine, CA 92614

Tel: 949.253.6500

Fax: 949.253.6501

Web: www.visionsolutions.com

Email:


MC Press Online