Anyone who has ever worked with DOS or Windows is familiar with the pleasure (?) involved with disk partitioning. In these worlds, the disk can be split into one or more pieces, called partitions, to which the operating system assigns a drive letter. The first partition is traditionally the "C:" drive, with subsequent partitions getting the next sequential letter (this behavior can be altered via the OS tools). In the UNIX/Linux world, a disk can likewise be split into pieces, but unlike DOS/Windows, the file system is a tree structure, with each disk partition being "mounted" into a particular place in the tree. There is no concept of drive letters in *nix. Figure 1 shows a typically partitioned hard drive. No matter what operating system is used, the challenge is to decide how many partitions to create and how large to make each piece.

Figure 1: This drawing represents the age-old division of a single disk drive into a number of partitions.

Many variables are involved in the planning process, and failure to "get it right" could result in lots of wasted space (disk used to be quite expensive) or in running out of space prematurely. Historically, all of these operating systems have suffered from the same problem when a given partition fills to capacity: How do you add more? The traditional responses to a critical storage problem include replacing the current drive with one of greater capacity or adding more drives. Both of these solutions require juggling existing data and typically require configuration changes to existing applications. Worse still, your maximum partition size is limited to the size of your largest hard drive unless your system is equipped with a RAID controller, which allows you to merge the capacities of several drives in a RAID 0 configuration.

Logical Volume Management (LVM) eliminates these problems by providing a level of abstraction between the physical drives (and their capacities) and the operating system. Drive space can be added easily and, with hot-swap hardware, on the fly while the system is running. Best of all, LVM is as applicable to the virtual hardware you get when using VMware or LPAR as it is to real hardware. Virtually all modern Linux distributions are LVM-ready, and the enterprise-level distributions set up your system with LVM automatically if you choose auto-partitioning during installation.

While I have heard and read comments that LVM is confusing, you have to deal with only three simple components, which I'll discuss next. Once you get the hang of LVM, you'll never want to go back to a plain disk setup.

Basic Building Block

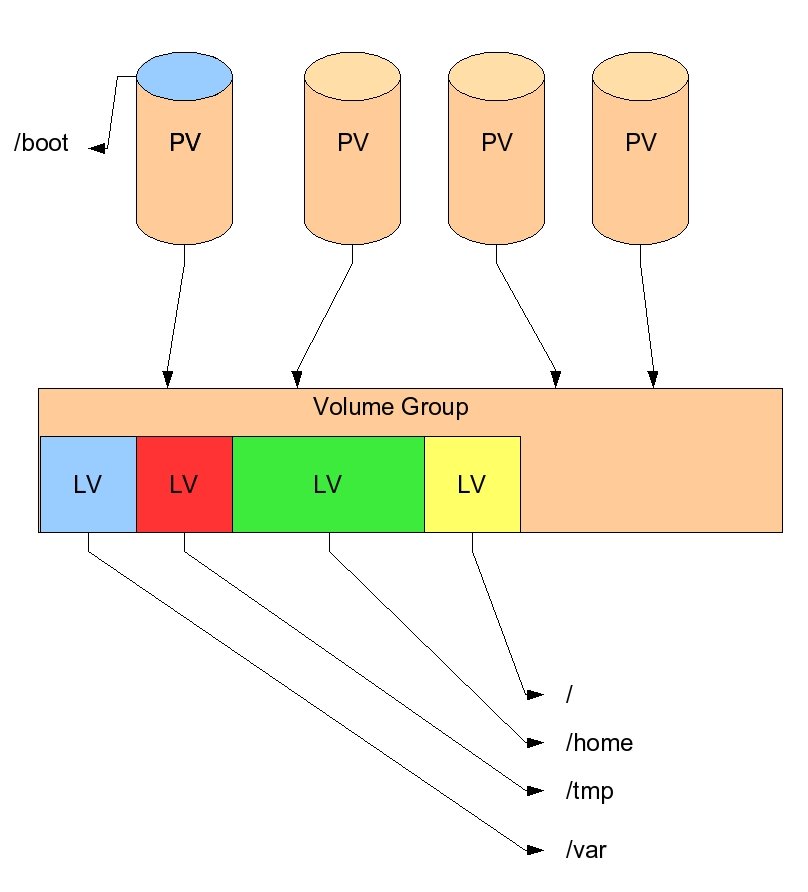

The whole of LVM consists of three components, as shown in Figure 2. The first is the basic building block of LVM, called the physical volume (PV). A physical volume can be a partition of an actual hard drive (with its partition type ID set to the hex value 8e), an entire hard drive, or certain metadevices, such as iSCSI. To prepare a physical volume for LVM, you need only issue the command pvcreate partition_or_device_name, which writes LVM metadata to it.

Figure 2: LVM creates a pool of space from which individual partitions (called logical volumes) can be created and dynamically resized.

On my laptop (one 100 GB IDE drive), I have the following physical volumes (the pvdisplay command shows the physical volume information available to the system):

    --- Physical volume ---
    PV Name               /dev/hda5
    VG Name               VolGroup20050306
    PV Size               53.59 GB / not usable 0
    Allocatable           yes
    PE Size (KByte)       32768
    Total PE              1715
    Free PE               64
    Allocated PE          1651
    PV UUID               w7BBJd-qNnk-L4sS-wAx8-bB8m-81bi-MBixOP

    --- Physical volume ---
    PV Name               /dev/hda6
    VG Name               VolGroup20050306
    PV Size               37.25 GB / not usable 0
    Allocatable           yes
    PE Size (KByte)       32768
    Total PE              1192
    Free PE               68
    Allocated PE          1124
    PV UUID               An6EFC-Bnd8-qLGm-tzWP-LZ4w-1fLA-f7FVOo
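pvdisplay reports capacity in physical extents (PEs), the fixed-size chunks from which LVM hands out space. The short Python sketch below is only a worked check of the numbers in that listing, not an LVM tool. Multiplying Total PE by the 32,768 KB extent size reproduces the 53.59 GB and 37.25 GB PV sizes, which suggests these "GB" figures are binary gigabytes (GiB). Summing Free PE shows how much unallocated room the volume group still has.

```python
# Extent arithmetic for the two physical volumes in the pvdisplay listing.
# Counts are copied from that output; results are in binary units (GiB).

PE_SIZE_KIB = 32768  # "PE Size (KByte)": 32 MiB per physical extent

def extents_to_gib(extents: int, pe_size_kib: int = PE_SIZE_KIB) -> float:
    """Convert a number of physical extents to GiB."""
    return extents * pe_size_kib / (1024 * 1024)

pvs = {
    "/dev/hda5": {"total": 1715, "free": 64, "allocated": 1651},
    "/dev/hda6": {"total": 1192, "free": 68, "allocated": 1124},
}

for name, pe in pvs.items():
    # Every extent on a PV is either free or allocated to some LV.
    assert pe["free"] + pe["allocated"] == pe["total"]
    print(f"{name}: {extents_to_gib(pe['total']):.2f} GiB in extents")

vg_free = sum(pe["free"] for pe in pvs.values())
print(f"free in VolGroup20050306: {vg_free} extents, "
      f"{extents_to_gib(vg_free):.3f} GiB")
```

Taken together, the two PVs leave 132 extents (4.125 GiB) free for new or enlarged logical volumes.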

The drive is partitioned this way:

    255 heads, 63 sectors/track, 12161 cylinders
    Units = cylinders of 16065 * 512 = 8225280 bytes

       Device Boot      Start         End      Blocks   Id  System
    /dev/hda1              1         154     1236973+  b  W95 FAT32
    /dev/hda2  *         155         167      104422+ 83  Linux
    /dev/hda3            168         298     1052257+ 82  Linux swap
    /dev/hda4            299       12161    95289547+  5  Extended
    /dev/hda5            299        7296    56211403+ 8e  Linux LVM
    /dev/hda6           7297       12161    39078081  8e  Linux LVM
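The fdisk listing also confirms the size of the drive. Each cylinder is 255 * 63 sectors of 512 bytes, and 12,161 cylinders come to about 100 GB. The Python sketch below recomputes the Blocks column (1 KB units) from the Start and End cylinders. It rests on one assumption about classic DOS-style partitioning: the first primary partition and each logical partition begin one track (63 sectors) into their first cylinder, while the other primaries start exactly on a cylinder boundary. A trailing "+" marks an odd leftover 512-byte sector, which is how fdisk displays it.

```python
# Recompute fdisk's "Blocks" column (1 KiB units) from cylinder ranges.
# Assumption: the first primary and all logical partitions skip one track
# (63 sectors) at their start; other primaries start on a cylinder boundary.

HEADS, SECTORS_PER_TRACK, SECTOR_BYTES = 255, 63, 512
SECTORS_PER_CYL = HEADS * SECTORS_PER_TRACK  # 16065

def fdisk_blocks(start_cyl: int, end_cyl: int, skips_track: bool) -> str:
    """Size in 1 KiB blocks, with '+' appended for an odd trailing sector."""
    sectors = (end_cyl - start_cyl + 1) * SECTORS_PER_CYL
    if skips_track:
        sectors -= SECTORS_PER_TRACK
    blocks, odd = divmod(sectors, 2)  # two 512-byte sectors per 1 KiB block
    return f"{blocks}+" if odd else str(blocks)

print("cylinder unit:", SECTORS_PER_CYL * SECTOR_BYTES, "bytes")
print("disk size:", 12161 * SECTORS_PER_CYL * SECTOR_BYTES / 10**9, "GB")
print("hda1:", fdisk_blocks(1, 154, skips_track=True))
print("hda5:", fdisk_blocks(299, 7296, skips_track=True))
print("hda6:", fdisk_blocks(7297, 12161, skips_track=True))
```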

You may be wondering why I have two physical volumes (Linux LVM) on this drive instead of simply one large one. Good question! The reason is that the partition /dev/hda6 was being used for other things (another Linux installation). When I was able to clear it, I wanted to add it to my main Linux installation. Setting it as a physical volume was the easy way to include it, as you will see shortly.

An Amalgamation

Once you have created one or more physical volumes, you need to create a volume group (VG). The volume group is really nothing more than the amalgamation of one or more physical volumes, which then will be carved up into partition-like entities called logical volumes. You need at least one volume group for LVM, but you can create as many as you need. This is the simplified command to create a volume group:

vgcreate vg_name partition_or_device_name_1 [partition_or_device_name_n]

So for my laptop example, I would have used this:

vgcreate VolGroup20050306 /dev/hda5 /dev/hda6

In reality, I'm not being completely truthful: I actually added /dev/hda6 to the existing group at a later time (I'll be bringing that up shortly, too), but the example command would do the trick.

For those unfamiliar with the *nix way of naming devices, note that the partitions I enumerated were /dev/hda5 and /dev/hda6. Device files (and in *nix, everything is a file) are stored in the file system under the /dev directory. The "hda" portion is the master drive on the first IDE channel; the slave would be "hdb", and so forth. The digit is the partition number, which you can ascertain by looking at the partition information produced by the fdisk command (shown above). Your volume group also shows up under /dev, as a directory named after the group that holds the device files for its logical volumes. Thus, the volume group in my example is /dev/VolGroup20050306.
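This naming scheme is regular enough to decode mechanically. The Python sketch below is illustrative only and covers just the classic /dev/hdX names used in this article. Letters pair up as master and slave on successive IDE channels (hda/hdb on the first, hdc/hdd on the second), and partition numbers 5 and above denote logical partitions inside an extended partition, as with /dev/hda5 and /dev/hda6 in the fdisk listing. The function name describe_ide is hypothetical.

```python
import re

# Classic Linux IDE names: /dev/hd<letter>[<partition>].
IDE_NAME = re.compile(r"^/dev/hd([a-z])(\d*)$")

def describe_ide(path: str) -> dict:
    """Decode a classic IDE device path (hypothetical helper)."""
    m = IDE_NAME.match(path)
    if m is None:
        raise ValueError(f"not a classic IDE device name: {path}")
    index = ord(m.group(1)) - ord("a")       # a=0, b=1, c=2, d=3, ...
    part = int(m.group(2)) if m.group(2) else None
    return {
        "channel": index // 2 + 1,           # two drives per IDE channel
        "position": "master" if index % 2 == 0 else "slave",
        "partition": part,                   # None means the whole drive
        "logical": part is not None and part >= 5,
    }

print(describe_ide("/dev/hda5"))
print(describe_ide("/dev/hdd"))
```

By this scheme, the /dev/hdd drive that appears later in the article is the slave drive on the second IDE channel.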

Logical Volumes

Once we have created our volume group, we have what amounts to an oversized hard drive. To make it useful, we need to carve it into pieces, called "logical volumes," that are similar to partitions on a hard drive. The command to do that is simple:

lvcreate -n name -L size volume_group

If I wanted to create a new 250 MB logical volume (partition) called "test" on my laptop, I would issue this command:

lvcreate -n test -L250M /dev/VolGroup20050306

Once the command completes, the volume appears under the /dev/VolGroup20050306 directory as /dev/VolGroup20050306/test. From then on, you treat the logical volume as you would any partition: You must first format it (mkfs.ext3 /dev/VolGroup20050306/test), and then you need to mount it into the file system tree (mkdir /test ; mount /dev/VolGroup20050306/test /test). To mount this logical volume automatically during system startup, add an entry for it to the /etc/fstab file.
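The create, format, and mount sequence is easy to script. The Python sketch below is a hypothetical helper, not part of LVM. By default it only prints the commands from the text (a dry run), and it passes the volume group by name rather than as a /dev path. It also builds one plausible /etc/fstab line for the new volume, assuming ext3 with default mount options, a dump flag of 1, and a file system check pass of 2. Actually running the commands requires root privileges and the LVM tools.

```python
import shlex
import subprocess

def new_lv_steps(vg: str, name: str, size: str, mountpoint: str) -> list[list[str]]:
    """The commands from the text: create, format, make mount point, mount."""
    dev = f"/dev/{vg}/{name}"
    return [
        ["lvcreate", "-n", name, "-L", size, vg],
        ["mkfs.ext3", dev],
        ["mkdir", mountpoint],
        ["mount", dev, mountpoint],
    ]

def fstab_line(vg: str, name: str, mountpoint: str, fstype: str = "ext3") -> str:
    """One /etc/fstab entry: device, mount point, type, options, dump, fsck pass."""
    return f"/dev/{vg}/{name}\t{mountpoint}\t{fstype}\tdefaults\t1\t2"

def run(steps: list[list[str]], dry_run: bool = True) -> None:
    for argv in steps:
        print("+", shlex.join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)  # needs root and the LVM tools

steps = new_lv_steps("VolGroup20050306", "test", "250M", "/test")
run(steps)  # dry run: prints the commands only
print(fstab_line("VolGroup20050306", "test", "/test"))
```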

Impossible to Do

Other than allowing you to assemble many small disk drives into what appears to be one large one (something that RAID 0 will also do), what makes LVM special? For one thing, it's easy to enlarge a logical volume. Let's say that the "test" logical volume I created earlier is running out of room, and I'd like to double its size. Easily done! First, I extend the logical volume:

lvextend -L+250M /dev/VolGroup20050306/test

Then, I extend the file system hosted there. For the ext2/ext3 file systems, the command is ext2online /test. Please note that I did this with the system live and with the file system mounted. That's something that's impossible to do unless you're using LVM.
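One detail worth knowing when sizing volumes is that LVM allocates logical volumes in whole physical extents, so requested sizes are rounded up. With the 32 MB extents shown by pvdisplay on this laptop, the 250 MB "test" volume should actually occupy 8 extents (256 MB), and growing it by another 250 MB should land on 16 extents (512 MB). The small Python helper below (hypothetical, not an LVM API) does that rounding.

```python
def round_to_extents(size_mib: int, pe_mib: int = 32) -> tuple[int, int]:
    """Return (extents, allocated MiB) for a requested size, rounding up."""
    extents = -(-size_mib // pe_mib)  # ceiling division on integers
    return extents, extents * pe_mib

print(round_to_extents(250))        # the 250 MB lvcreate request
print(round_to_extents(256 + 250))  # the rounded volume grown by 250 MB
```

The extension therefore needs 8 more free extents from the volume group, well within the 132 free extents in the pvdisplay output.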

Path of Least Resistance

Enlarging a logical volume depends, of course, on your having unused space in your volume group. I usually create my logical volumes such that the sum of their sizes is less than the total size of the volume group. This gives me some breathing room as the volumes fill. Eventually, though, I do find myself in need of more space. (Who doesn't?) Since I'm loath to clean out old data (who isn't?), I always tread the path of least resistance: I buy more drives! Once the new drive is installed in the system, which can be done live if your hardware supports it, it's a trivial matter to extend your volume group. First, prepare the drive to be a physical volume:

pvcreate partition_or_device_name

Then add it to the volume group:

vgextend vg_name partition_or_device_name

In my example, let's assume that I have added a drive identified as /dev/hdd. I'd simply issue pvcreate /dev/hdd, then vgextend VolGroup20050306 /dev/hdd. Instantly, I'd see that the new volume group size is larger by roughly the size of the new drive. Now I can extend the logical volumes as needed.
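Conceptually, a volume group is just a pool of extents contributed by its physical volumes, so vgextend simply makes the pool bigger. The toy Python model below illustrates that idea. The class and method names are hypothetical, the starting numbers come from the pvdisplay output earlier, and the 80 GB size for /dev/hdd is an assumption made up for the example. The model ignores the small amount of space pvcreate sets aside for LVM metadata, which is one reason the real gain is only "roughly" the size of the new drive.

```python
from dataclasses import dataclass, field

@dataclass
class VolumeGroup:
    """Toy model: a volume group as a pool of physical extents."""
    pe_mib: int
    total: dict[str, int] = field(default_factory=dict)  # PV -> extents
    used: dict[str, int] = field(default_factory=dict)   # PV -> allocated

    def extend(self, pv: str, size_mib: int) -> None:
        """Like pvcreate + vgextend: add the new PV's whole extents."""
        self.total[pv] = size_mib // self.pe_mib
        self.used[pv] = 0

    def free_extents(self) -> int:
        return sum(self.total[pv] - self.used[pv] for pv in self.total)

vg = VolumeGroup(
    pe_mib=32,
    total={"/dev/hda5": 1715, "/dev/hda6": 1192},
    used={"/dev/hda5": 1651, "/dev/hda6": 1124},
)
print("free before:", vg.free_extents())
vg.extend("/dev/hdd", 80 * 1024)  # hypothetical 80 GiB drive
print("free after: ", vg.free_extents())
```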

But what if there is no space to add additional drives in your computer's chassis? All is not lost, especially if you pay attention and catch the looming disk crisis before it gets too far. The vgextend command has a converse: vgreduce. If you need to add space and don't have anywhere to physically install a new drive, you can remove one of the existing drives and replace it with a larger one. The trick is to ensure that the remaining drives have enough free space to hold the data stored on the drive to be replaced.

For example, let's assume that you have a volume group that consists of four physical volumes on four physical drives, each 16 GB in size, and you want to start replacing these with 32 GB drives. As long as the other drives have enough free space to hold the data contained on the one you want to remove, you can take it out of the volume group with the pvmove and vgreduce commands: pvmove migrates any data currently stored on the target drive to other drives in the volume group, and vgreduce then removes the emptied physical volume from the group, enabling you to replace the drive. Once the new drive is installed, you use the pvcreate and vgextend commands to gain access to your new disk real estate. If desired, you can repeat this process for the remaining three 16 GB drives and double the size of your volume group. And with the right hardware, this all can be done without bringing down the system. While this may seem complicated, just think of the process as solving a sliding-tile puzzle, with the missing tile representing the available space.
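The drive-swap procedure boils down to one feasibility check followed by some bookkeeping. The Python sketch below models it with hypothetical drive names and whole-gigabyte "extents." A drive can be evacuated only if the extents allocated on it fit into free extents on the other physical volumes, which is the job pvmove does before vgreduce removes the emptied volume. The model deliberately ignores LVM's allocation policies and contiguity rules, so treat it as an illustration of the tile-puzzle logic, not as a planner for a real system. The starting point assumes each of the four 16 GB drives holds 12 GB of data.

```python
# Each PV maps to (total extents, allocated extents); 1 extent = 1 GB here.
PVMap = dict[str, tuple[int, int]]

def can_evacuate(vg: PVMap, target: str) -> bool:
    """True if the data on `target` fits in free space on the other PVs."""
    _, used_on_target = vg[target]
    free_elsewhere = sum(total - used for name, (total, used) in vg.items()
                         if name != target)
    return used_on_target <= free_elsewhere

def replace_drive(vg: PVMap, target: str, new_pv: str, new_total: int) -> None:
    """pvmove + vgreduce on `target`, then pvcreate + vgextend on `new_pv`."""
    if not can_evacuate(vg, target):
        raise RuntimeError(f"not enough free space to evacuate {target}")
    to_move = vg[target][1]
    for name, (total, used) in vg.items():   # pvmove: relocate the data
        if name == target:
            continue
        take = min(total - used, to_move)
        vg[name] = (total, used + take)
        to_move -= take
    del vg[target]                           # vgreduce: drop the empty PV
    vg[new_pv] = (new_total, 0)              # pvcreate + vgextend: new drive

vg = {f"old{i}": (16, 12) for i in range(1, 5)}  # four 16 GB drives, 12 GB used
for i in range(1, 5):
    replace_drive(vg, f"old{i}", f"new{i}", 32)
    print(f"after swap {i}:", vg)
```

The first swap is the tight one: the 12 GB on the first drive exactly fills the 4 GB of free space left on each of the other three. After that, each new 32 GB drive provides the breathing room for the next swap.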

By the way, what I have said so far about adding physical volumes to a volume group applies equally to i5-hosted Linux partitions. Since such partitions see network server storage spaces (NWSSTG objects) as nothing more than additional hard drives, you can continue to extend the volume groups within them by linking additional NWSSTGs to the Linux partitions until you exhaust your available i5 storage. Adding to a Linux partition in this manner keeps your i5 disk space available for use on either the i5/OS side or the Linux side until you need it, so you can be a bit less concerned about "getting it right" when you configure a partition.

Mix and Match

One interesting facet of LVM is that the physical volumes in a volume group need not all be of the same drive type. You can mix and match IDE, SCSI, and SATA drives, as well as iSCSI and other metadevices, in the same volume group. You probably wouldn't want to do this on a full-time basis for performance reasons (you would be hampering the speed of your data access by including comparatively slow devices in a volume group with speed demons). However, as a way to add space to a volume group so that you can upgrade hard drives (as I discussed in the last section), this technique can help you keep your system running.

Extolling the Virtues

So far, I have been extolling the virtues of LVM for extending existing logical volumes. You also can reduce the size of a logical volume, enabling you to "rob Peter to pay Paul" should you find yourself running out of room in one volume while having a surfeit in another. Unfortunately, reducing a logical volume can't (at this time) be performed while it is mounted in the tree. You'll need to unmount it, thus making this operation an offline procedure. On top of that, you really do need to ensure that you perform the steps in the correct order, lest you corrupt the file system. (It just isn't acceptable to reduce a logical volume to a size smaller than the file system contained within it!) I'm not going to list the steps required to reduce an LV; those are enumerated in the LVM how-to. I just want you to know that the potential is there.
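The ordering rule can be made concrete without listing the actual commands. The Python model below uses hypothetical step names, not LVM commands. It tracks the logical volume size, the file system size, and whether the volume is mounted, and it rejects any step that would resize a mounted volume or leave the LV smaller than its file system.

```python
from dataclasses import dataclass, replace
from typing import Optional

@dataclass(frozen=True)
class State:
    lv_mib: int      # size of the logical volume
    fs_mib: int      # size of the file system inside it
    mounted: bool

def apply(state: State, op: str, size: Optional[int] = None) -> State:
    """Apply one abstract step, enforcing the rules from the text."""
    if op == "umount":
        return replace(state, mounted=False)
    if op == "mount":
        return replace(state, mounted=True)
    if state.mounted:
        raise RuntimeError(f"{op}: reduction is an offline operation; unmount first")
    if op == "shrink_fs":
        return replace(state, fs_mib=size)
    if op == "shrink_lv":
        if size < state.fs_mib:
            raise RuntimeError("shrink_lv: LV would be smaller than its file system")
        return replace(state, lv_mib=size)
    raise ValueError(f"unknown step {op!r}")

def run_plan(state: State, plan: list[tuple[str, Optional[int]]]) -> State:
    for op, size in plan:
        state = apply(state, op, size)
    return state

start = State(lv_mib=2048, fs_mib=2048, mounted=True)
good = [("umount", None), ("shrink_fs", 1024), ("shrink_lv", 1024), ("mount", None)]
print(run_plan(start, good))
```

Swapping the shrink_fs and shrink_lv steps in the good plan makes run_plan raise, which is exactly the mistake the text warns about.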

Snapshot Backups

While LVM is mostly about dynamically assigning disk space, another wonderful feature is that of making snapshot backups. What this entails is creating a logical volume, using a switch that signifies that the LV is a snapshot LV, and specifying the logical volume with which it is associated. "What did he just say?" I hear you utter. Let me first show an example:

    [root@laptop2 ~]# lvcreate -L512M -s -n home_snaps /dev/VolGroup20050306/log_home

      Logical volume "home_snaps" created
    [root@laptop2 ~]# lvs
      LV             VG               Attr     LSize Origin   Snap%  Move Log Copy%
      IBM            VolGroup20050306 -wi-a-   4.00G
      RHEL_AS4_PPC64 VolGroup20050306 -wi-a-   5.16G
      home_snaps     VolGroup20050306 swi-a- 512.00M log_home  0.00
      iso            VolGroup20050306 -wi-a-   1.00G
      log_home       VolGroup20050306 owi-ao  25.00G
      log_opt        VolGroup20050306 -wi-ao   2.00G
      log_rootdir    VolGroup20050306 -wi-ao   6.00G
      log_tmp        VolGroup20050306 -wi-ao   1.12G
      log_var        VolGroup20050306 -wi-ao   2.00G
      usr_local      VolGroup20050306 -wi-ao 256.00M
      vmstorage      VolGroup20050306 -wi-ao  38.22G
      xfstest        VolGroup20050306 -wi-a-   2.00G

In this example, I have created a logical volume labeled "home_snaps" that is associated with the "log_home" LV. I then can mount that volume into the tree, and when I access it, I'll find all of the files that were in the original volume at the time I created the snapshot LV. Now I can use my favorite backup program to save its contents while users continue to make changes, without hassle! In this case, I have allowed up to 512 MB worth of changes before the snapshot runs out of room (at which point it becomes invalid); you may want to make yours larger or smaller, depending on the activity in the LV you are working with.
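A snapshot can get by with a fraction of its origin's size because of copy-on-write. The snapshot stores the old contents of a chunk only the first time that chunk changes in the origin, and reads of unchanged chunks fall through to the origin. The toy Python class below illustrates that idea and the invalidation described above. Chunks here are list slots and capacity is a chunk count; both are simplifications of what device-mapper actually does with the 512 MB reserved in the example.

```python
class Snapshot:
    """Toy copy-on-write snapshot of an origin volume made of chunks."""

    def __init__(self, origin: list[str], cow_chunks: int) -> None:
        self.origin = origin              # the live volume users keep writing
        self.capacity = cow_chunks        # room reserved for saved chunks
        self.saved: dict[int, str] = {}   # chunk index -> contents at snapshot time
        self.valid = True

    def write_origin(self, index: int, data: str) -> None:
        """A user writes to the origin; save the old chunk first if needed."""
        if self.valid and index not in self.saved:
            if len(self.saved) >= self.capacity:
                self.valid = False        # out of room: snapshot is unusable
            else:
                self.saved[index] = self.origin[index]
        self.origin[index] = data

    def read(self, index: int) -> str:
        """Read the chunk as it was when the snapshot was taken."""
        if not self.valid:
            raise OSError("snapshot overflowed and was invalidated")
        return self.saved.get(index, self.origin[index])

volume = ["a", "b", "c", "d"]
snap = Snapshot(volume, cow_chunks=2)
snap.write_origin(0, "A")
snap.write_origin(0, "AA")   # chunk 0 already saved, uses no extra room
snap.write_origin(1, "B")
print([snap.read(i) for i in range(4)], volume)
snap.write_origin(2, "C")    # third distinct chunk: no room left
print("valid:", snap.valid)
```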

The usual way to use this feature is to create the snapshot LV, mount it in the system tree, and then back it up. Once the backup is completed, you can delete the snapshot logical volume. With a bit of planning, you can extend that use to provide a means to restore files that users have created and then (accidentally) deleted before your normal nightly backup has had a chance to capture them. This has the potential to make you a real hero to some hapless user having a bad day.
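That create, mount, back up, and delete routine is easy to script. The Python sketch below is a hypothetical wrapper that, by default, only prints the commands (a dry run). It uses tar as a stand-in for "your favorite backup program" and mounts the snapshot read-only. The mount point /mnt/home_snaps and the archive path /backup/home.tar.gz are assumptions. The try/finally blocks ensure the snapshot is unmounted and removed even if the backup step fails, so a forgotten snapshot cannot quietly fill up and become invalid.

```python
import shlex
import subprocess

VG, ORIGIN, SNAP = "VolGroup20050306", "log_home", "home_snaps"  # from the article
MOUNTPOINT = "/mnt/home_snaps"        # hypothetical mount point
ARCHIVE = "/backup/home.tar.gz"       # hypothetical backup destination

def sh(argv: list[str], log: list[list[str]], dry_run: bool) -> None:
    """Record and print a command; execute it only when dry_run is False."""
    log.append(argv)
    print("+", shlex.join(argv))
    if not dry_run:
        subprocess.run(argv, check=True)  # needs root and the LVM tools

def snapshot_backup(dry_run: bool = True) -> list[list[str]]:
    log: list[list[str]] = []
    snap_dev = f"/dev/{VG}/{SNAP}"
    # Reserve 512 MB for changes made to log_home while the backup runs.
    sh(["lvcreate", "-L", "512M", "-s", "-n", SNAP, f"/dev/{VG}/{ORIGIN}"], log, dry_run)
    try:
        sh(["mkdir", "-p", MOUNTPOINT], log, dry_run)
        sh(["mount", "-o", "ro", snap_dev, MOUNTPOINT], log, dry_run)
        try:
            # tar stands in for "your favorite backup program".
            sh(["tar", "-czf", ARCHIVE, "-C", MOUNTPOINT, "."], log, dry_run)
        finally:
            sh(["umount", MOUNTPOINT], log, dry_run)
    finally:
        # Always drop the snapshot so it cannot fill up and become invalid.
        sh(["lvremove", "-f", snap_dev], log, dry_run)
    return log

snapshot_backup()  # dry run: prints the six commands
```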

Two Kinds

There are two types of Linux users right now: those who use LVM and those who will. Logical Volume Management has grown up to become enterprise-ready, with too many nice features to ignore. Since it is appearing as the default in an increasing number of Linux distribution installations, you'll have to go out of your way to avoid it.

When you are ready to learn more, I encourage you to check out the LVM how-to. You'll find that the how-to contains not only the usual dry explanations but also a wonderful group of recipes to show you the power that is LVM. I haven't even touched on the LVM options that allow you to optimize your logical volumes for performance, but the how-to does.

That's it for this month. I hope that everyone has a wonderful holiday season and a happy new year!

Barry L. Kline is a consultant and has been developing software on various DEC and IBM midrange platforms for over 23 years. Barry discovered Linux back in the days when it was necessary to download diskette images and source code from the Internet. Since then, he has installed Linux on hundreds of machines, where it functions as servers and workstations in iSeries and Windows networks. He co-authored the book Understanding Linux Web Hosting with Don Denoncourt. Barry can be reached at


MC Press Online