"Gain a modest reputation for being unreliable and you will never be asked to do a thing."

--Paul Theroux

"Web Services." With the possible exception of "object-oriented" and maybe "grid computing," there hasn't been a more promise-laden phrase in Information Technology since "CASE." And while the concept of Web Services hasn't fizzled out like some of its peers (can you say "Extreme Programming"?), it has reached that rather tender age where it has to either put up or shut up. This is the time I like, actually, because it means we can have a frank and open discussion about both the advantages and the drawbacks of the technology without fear of being burned at the stake by the techno-evangelists who have by now leapt upon another bandwagon.

In today's column, I will discuss a couple of developments that may be the first signs of Web Services maturing from promising new technology to real-world tool: message reliability and identity management. The trick will be to describe these things without my head exploding, but that's my problem.

A Little Reminiscing

So, what was the hype about Web Services? Remember back when "Web Services" was the mantra breezing across everyone's lips? Ah yes, the idea was that computers would be able to talk to each other without programming, that they would simply exchange standardized packets of data, thereby allowing them to seamlessly interconnect. Not only that, but services would spring up across the Internet, registered via a central directory and accessible by anyone. Rather than wasting time and money with expensive programmers, you would simply reach out to the 'net whenever you needed some computing done; the Internet would become one vast reservoir of computing services available to you at the turn of a virtual spigot. Even better, you would be able to choose among the many service providers that would be fighting for your business. It was like that television commercial: "When banks compete, you win!"

Sure, there were some pesky details, like who would make sure that the algorithms worked and that the providers would be stable and that the networks would be available. Not to mention annoying little issues like Sarbanes-Oxley and exactly how much of your secure enterprise data would be sent to some unknown entity and what would happen to your business on the day you looked for a required service and nobody was providing it anymore. Those were mere minutiae, factual frippery spoiling a beautiful theory. The fact that people with a lot of experience in the industry were calling Web Services "EDI for XML" and pointing out how long it took EDI to become established was simply a case of sour grapes.

What's Happening Today?

Well, it's now a couple of years later, and Web Services have not yet replaced programmers. But a couple of niches are being filled. Most stock trading functions are available, from price quotes to lists of insider trades. Free Web Services exist for things like address lookups, while other vendors offer for-fee services ranging from currency conversion to full-blown accounting packages. ObjAcct, for example, is quite interesting. As far as I can tell, it's a complete accounting system with a Web Services interface that you can "embed" in your own applications. Whether or not these folks make it, the concept of providing access to your enterprise application as a Web Service seems to me to be the next evolutionary step past the idea of the Application Service Provider (ASP). Whereas an ASP provides users with access to a centralized application, a Web Services programming interface (WSPI?) provides programmatic access to that application from other applications.

If you'd like to peruse the Web Services that are currently available, you might want to visit a few of the larger repositories. For example, RemoteMethods.com has a list of several hundred Web Services from various Web Service Providers (WSPs), while StrikeIron's Global Directory lists over 1,500 (a couple hundred of them are commercial services from StrikeIron itself). WebServiceX.net is an interesting site that hosts over 70 free services.

Other than this, though, the primary Web Services providers are large companies that want standardized communications not only with their suppliers and customers but also within their own organizations. The perfect example is General Motors, which is basically converting its former EDI model over to Web Services. Rather than sour grapes, EDI replacement is actually the focus of Web Services at this point.

The State of the Technology

So now we come to the sticky part. Without going too deeply into the political infighting surrounding the entire Web Services concept (Microsoft and IBM, joined in a marriage of convenience, are pitted against the rest of the industry, and this struggle has resulted in no fewer than four organizations providing "assistance" with the standards process), it's clear that there is currently no real standard for Web Services and that in fact the industry is becoming more, not less, fragmented. Here is just a single example: IBM has two separate protocols, Web Services Reliable Messaging (WS-RM) and Web Services Reliability (WS-R), both of which attempt to provide the same basic service through two distinctly different mechanisms. Even though IBM is a founding member of OASIS (the Organization for the Advancement of Structured Information Standards), only one of these protocols, WS-R, is an OASIS standard. And that's not even the confusing part! Here's the bit that makes it nearly surreal: OASIS calls WS-R "Web Services Reliable Messaging." My head hurts.

Where's the SOAP?

Let's consider one more side issue before we get into these new technologies. There is an ongoing struggle between the Simple Object Access Protocol (SOAP) camp and the rest of the world, "rest" being an entirely apropos word since the competing technology is Representational State Transfer, or REST. REST is to SOAP as JavaServer Pages (JSPs) are to Struts, or as Plain Old Java Objects (POJOs) are to Enterprise Java Beans (EJBs). REST is fast, lightweight, easy to use, and easy to program while SOAP is slow, bloated, and difficult to implement without specialized programming tools. As an example, the following listing shows the SOAP equivalent of an acknowledgement (or ACK, which back in the day was a single character):

http://www.ibm.com/guid/a8f7151a091b50a42b38e04437774e11

That's not the whole SOAP message, either. Note the four or five places where an ellipsis is used to indicate more required information. EJBs crumbled under their own weight, and Struts has been replaced (albeit by an even more cumbersome and bloated standard). While SOAP will not die, being supported as it is by IBM and Microsoft, it is still not the global standard and probably won't be the interface of choice any time soon. In fact, one of the largest providers of Web Services, Amazon.com, allows both interfaces, and the last time I checked, over 80% of the requests were REST rather than SOAP.
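To put a rough number on the size difference, here is a minimal sketch that builds the same request both ways. It is not the acknowledgement message discussed above; the stock quote service, its `example.com` address, and the `GetQuote` operation are all hypothetical, invented purely for illustration. Only the SOAP 1.1 envelope namespace is real.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
SERVICE_NS = "http://example.com/quotes"  # hypothetical service namespace


def rest_request(symbol):
    """REST style: the whole call fits in a URL sent with an HTTP GET."""
    return "http://example.com/quotes?" + urlencode({"symbol": symbol})


def soap_request(symbol):
    """SOAP style: the same call wrapped in an envelope, to be POSTed."""
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{SERVICE_NS}}}GetQuote")
    ET.SubElement(call, f"{{{SERVICE_NS}}}symbol").text = symbol
    return ET.tostring(envelope, encoding="unicode")


if __name__ == "__main__":
    for label, text in (("REST", rest_request("IBM")), ("SOAP", soap_request("IBM"))):
        print(label, len(text), "characters")
        print(text)
```

Even this bare-bones envelope, with no headers for security or reliability, is several times the length of the REST URL. Real SOAP messages add more on top of it.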

However, this is edging into the technical side of things, which I hope to cover in a bit more detail in an upcoming issue of iApplication Designer. For the moment, though, the only "official" standards on reliability and security are the OASIS standards, and OASIS is built on SOAP, so let's assume that SOAP is part of your infrastructure.

The Problems and the Proposed Solutions

The big problems in any sort of distributed architecture are straightforward and are as old as the concept of client/server computing, which in turn is nearly as old as data processing itself. Looking at it from the client/server standpoint then, there are two age-old questions. First, the server wants to know whether the client is really who he says he is. Second, the client wants to know whether the server actually processed his request.

Security

Simple authentication is available for just about any Web communication. You see this whenever you go to a Web site and are challenged with a standard HTTP authentication prompt (although those seem to be giving way to regular Web pages asking for sign-on information). However, that's really the "human on a browser" type of authentication, and it has limitations. For the most part, those limitations can be thought of as confining the width of the transaction.

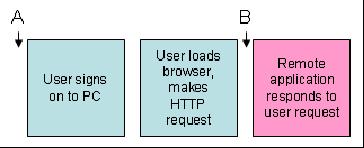

Figure 1: This is the standard human-oriented Web authentication system.

Figure 1 shows the standard HTTP authentication scenario used when surfing the Web. The user signs on to the PC and then loads the browser. The browser makes HTTP requests to a remote application, which responds to the request. If authentication is required, it happens at point B and requires human intervention. If multiple requests require authentication, the user would need to key in a user ID and password at each challenge. But since surfing involves getting an entire page of data for each request, even the worst case would typically never require more than one authentication per page. In this scenario, authentication challenges aren't such a burden.
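For readers who have never looked behind the prompt, the sketch below shows what the browser sends after the user keys in a user ID and password, assuming the server asked for the HTTP Basic scheme (other schemes exist). The function names are mine, chosen for illustration.

```python
import base64


def basic_auth_header(user_id, password):
    """Build the Authorization header value for the HTTP Basic scheme."""
    credentials = f"{user_id}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


def parse_basic_auth(header):
    """Server side: recover the user ID and password from the header value."""
    scheme, _, encoded = header.partition(" ")
    if scheme != "Basic":
        raise ValueError("not a Basic authorization header")
    decoded = base64.b64decode(encoded).decode("utf-8")
    user_id, _, password = decoded.partition(":")
    return user_id, password


if __name__ == "__main__":
    header = basic_auth_header("Aladdin", "open sesame")
    print("Authorization:", header)
    print(parse_basic_auth(header))
```

Note that Base64 is an encoding, not encryption, so this scheme protects nothing unless the connection itself is encrypted.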

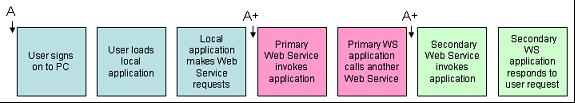

Figure 2: In a more automated environment, authentication is propagated along the transaction.

But in a more complex environment, the Web is used less as a human-activated query tool than as a computer-to-computer communications device. A single action by a user on the local workstation could invoke one or more transactions from different computers on the network, which could in turn invoke transactions on yet other computers. Figure 2 shows how the initial authentication created at sign-on time (A) is propagated down the chain to various subsidiary computers (A+). At each point, some sort of token is automatically exchanged between layers, removing the need to go back to the requester to get authentication.

In this environment, unlike with a standard browser interface, a user without some method of replicating authentication would be inundated with challenges, including challenges from computers on which the user has no account, making it impossible to provide credentials. So what began as a simple exercise in authenticating a user now becomes a complex task involving authentication, single sign-on, and federation of trust. It is this level of complexity that requires a specification higher than the simple REST architecture, and this is what the various Web Services organizations are trying to address. The WS-Security, WS-Trust, and WS-Federation standards all play key roles in the conversation depicted in Figure 2.
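The propagation idea in Figure 2 can be sketched in a few lines. This is emphatically not WS-Security or WS-Trust; it is a toy that assumes every system in the chain shares one secret key, which real federations avoid. The services, the key, and the token layout are all hypothetical.

```python
import hashlib
import hmac

SHARED_SECRET = b"demo-secret"  # hypothetical key known to every trusted system


def issue_token(user):
    """Point A: sign the user ID once, at sign-on time."""
    sig = hmac.new(SHARED_SECRET, user.encode(), hashlib.sha256).hexdigest()
    return f"{user}:{sig}"


def verify_token(token):
    """Any downstream system (A+): check the signature, return the user ID."""
    user, _, sig = token.rpartition(":")
    expected = hmac.new(SHARED_SECRET, user.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        raise PermissionError("token rejected")
    return user


def inventory_service(token):
    """A second-tier system the user never signed on to directly."""
    user = verify_token(token)
    return f"inventory checked for {user}"


def order_service(token):
    """A first-tier system that calls another system on the user's behalf."""
    user = verify_token(token)
    # Forward the same token instead of going back to challenge the user.
    return f"order placed for {user}; " + inventory_service(token)


if __name__ == "__main__":
    print(order_service(issue_token("joe")))
```

The user authenticates once; every hop after that trusts the token rather than the person. The hard parts that the WS-* specifications take on (who issues tokens, who trusts whom, how tokens expire) are exactly what this sketch leaves out.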

Reliability Becomes Key

As we have already noted, in the original Web design, the client was always considered to be a human being using a browser. Common sense and the Refresh button were pretty much all that was required to handle any communications problems. And even then you often saw this message on your browser screen: "Do not hit Refresh on this page or your account may be billed twice!" Not particularly comforting, but unless the user was a glutton for punishment, chances were you wouldn't get more than the rare double-post. And even if that did happen, there usually was a human being involved with a vested interest in verifying the transaction.

One of the truths of automation, though, is that your procedures need to be very good: When you have a flaw in your process, the computer can execute that flaw literally hundreds of times a second, and there is no human oversight at the individual transaction level. These flaws can have very serious negative effects. Think about it: If a cancel order request doesn't make it through the system, an unexpected order can be executed. This can result in anything from a double billing for the latest Nickelback ring tone to a delivery of a train car load of hog bellies to an unsuspecting commodities trader.

The standard HTTP protocol has no way of handling this problem, which is why SOAP over HTTP has some inherent difficulties. Although the mechanics of reliable messaging have long been established, they simply weren't incorporated into the simple put/get design of HTTP. HTTP can at best return a response that indicates success or failure, but the response doesn't indicate whether the transaction was successful; it indicates only the status of the communication between the browser and the HTTP server. For example, even though the HTTP server says the transaction was successful, that doesn't mean the server actually completed the transaction embedded in the request. Instead, you need to build some sort of acknowledgement into your application, and the acknowledgement must be sent back to the requester.
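Here is a minimal sketch of that distinction, using a simulated server rather than a real network. The order-processing logic, the field names, and the `Response` type are assumptions made for the example; the point is only that the requester checks the acknowledgement, not the HTTP status.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    http_status: int      # what the HTTP layer reports
    ack: Optional[dict]   # application-level acknowledgement, if one came back


def handle(request, stock):
    """Hypothetical server: delivery always succeeds, the transaction may not."""
    item, qty = request["item"], request["qty"]
    if stock.get(item, 0) >= qty:
        stock[item] -= qty
        ack = {"message_id": request["message_id"], "completed": True}
    else:
        ack = {"message_id": request["message_id"], "completed": False,
               "reason": "insufficient stock"}
    # Either way the HTTP exchange itself worked, so the status is 200.
    return Response(http_status=200, ack=ack)


def transaction_completed(request, response):
    """Trust the acknowledgement for this message, not the HTTP status alone."""
    ack = response.ack
    return (response.http_status == 200
            and ack is not None
            and ack["message_id"] == request["message_id"]
            and ack["completed"])


if __name__ == "__main__":
    stock = {"widget": 5}
    for qty in (3, 10):
        req = {"message_id": f"m{qty}", "item": "widget", "qty": qty}
        resp = handle(req, stock)
        print(qty, resp.http_status, transaction_completed(req, resp))
```

Both requests come back with a 200, but only the first one actually shipped anything.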

This is particularly important for multi-part messages. When a message is so large that it needs to be broken into multiple pieces, it is important to have a way of reliably segmenting a message and reassembling it at the other end of the communication. TCP/IP already has a method to provide reliable transport at the packet level; this concept needs to be reapplied at the larger message level of the Web Service request and response.
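The same sequence-number trick that works for packets works for messages. The sketch below is not taken from any of the reliability specifications; the segment layout (`message_id`, `seq`, `total`, `data`) is an assumption chosen to keep the example short.

```python
def segment(message_id, payload, size):
    """Split a payload into numbered pieces of at most `size` bytes."""
    chunks = [payload[i:i + size] for i in range(0, len(payload), size)]
    return [{"message_id": message_id, "seq": n, "total": len(chunks), "data": c}
            for n, c in enumerate(chunks, start=1)]


def reassemble(segments):
    """Return (payload, []) when complete, else (None, missing sequence numbers)."""
    if not segments:
        return None, []
    total = segments[0]["total"]
    by_seq = {s["seq"]: s["data"] for s in segments}  # duplicates collapse here
    missing = [n for n in range(1, total + 1) if n not in by_seq]
    if missing:
        return None, missing  # the receiver can ask for just these pieces
    return b"".join(by_seq[n] for n in range(1, total + 1)), []


if __name__ == "__main__":
    parts = segment("m1", b"abcdefghij", 4)
    arrived = [parts[2], parts[0], parts[0]]       # out of order, one duplicate
    print(reassemble(arrived))                     # piece 2 is still missing
    print(reassemble(arrived + [parts[1]]))        # now complete
```

Because each piece carries its position and the total count, the receiver can tolerate pieces arriving late, twice, or out of order, and it knows exactly what to ask for when something never shows up.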

Even worse, a failure in the HTTP request doesn't necessarily mean that the message failed! The request could have made it all the way to the server, only for some temporary network problem to keep the serving application's response from getting back. The message could have been processed, but the HTTP server would tell the requester that the request failed. This could cause the requester to re-send the request, which could result in a double posting. Obviously, some method of avoiding duplication needs to be put in place, as well as a way of requesting that messages be re-sent.
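One common way to get both halves, re-sending and duplicate avoidance, is to tag every message with a unique ID and have the receiver remember the IDs it has already processed. The sketch below simulates the lost-response scenario just described; the classes and the in-memory bookkeeping are hypothetical stand-ins, not any particular product or protocol.

```python
class Receiver:
    """Hypothetical posting application that remembers what it has processed."""

    def __init__(self):
        self.balance = 0
        self.seen = {}  # message_id -> result already produced

    def post(self, message_id, amount):
        if message_id in self.seen:
            return self.seen[message_id]  # duplicate: replay the result, don't re-post
        self.balance += amount
        result = {"message_id": message_id, "balance": self.balance}
        self.seen[message_id] = result
        return result


class FlakyNetwork:
    """Delivers every request but loses the first `drop_responses` responses."""

    def __init__(self, receiver, drop_responses):
        self.receiver = receiver
        self.drop_responses = drop_responses

    def send(self, message_id, amount):
        result = self.receiver.post(message_id, amount)  # the request did arrive
        if self.drop_responses > 0:
            self.drop_responses -= 1
            raise TimeoutError("response lost")
        return result


def send_reliably(network, message_id, amount, max_attempts=3):
    """Re-send until a response arrives; the message ID makes retries harmless."""
    for _ in range(max_attempts):
        try:
            return network.send(message_id, amount)
        except TimeoutError:
            continue
    raise TimeoutError("gave up after %d attempts" % max_attempts)


if __name__ == "__main__":
    receiver = Receiver()
    network = FlakyNetwork(receiver, drop_responses=2)
    print(send_reliably(network, "m1", 100))
    print("final balance:", receiver.balance)
```

The requester sends the same message three times, but the amount is posted only once. A real implementation would also need to persist the list of seen IDs and decide how long to keep them.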

Note that one possibility to avoid this entire issue is to remove HTTP from the equation and instead use another wire protocol for sending Web Services information. This could remove the Internet from the equation entirely. And for company-specific Web Services (such as communicating with GM), this might actually be an option. I suppose for large corporate providers you could dial in (or otherwise connect) directly to some sort of company-specific Web Services network. But in order to provide this level of interaction between unrelated companies without building an entire alternate communications infrastructure, the Internet and HTTP will probably still need to be the primary transport layer.

The WS-Reliable Messaging standard is the primary player in this effort and, like all of the OASIS standards, it is based on SOAP as the underlying message layout.

Conclusions

Right now, there is a technical (and somewhat philosophical) debate being waged about the individual merits of SOAP and REST because both can provide access to basic Web Services. REST is much simpler and does not rely on proprietary tooling. (Make no mistake: The SOAP standards are open, but using them requires proprietary tools.) On the other hand, the big players are building a framework on SOAP that incorporates all the client/server capabilities we've learned over the past four decades of computing, things that a larger vision of completely distributed applications depends upon. These features cannot be added to the HTTP protocol, and the very nature of REST (that is, reliance on underlying services) means that REST will probably never support them. That being the case, there really is no alternative to SOAP and the OASIS Web Services protocols for building complex multi-computer applications using XML over HTTP.

On the other hand, the fact that these features are being hammered out, even if it is only in the SOAP realm, means that the Web Services architecture may finally be able to take its place as a viable replacement not only for EDI and other batch processing designs, but for more dynamic online transaction processing applications. My only disappointment will be that the cumbersome SOAP standard will be dragged along with it.

Joe Pluta is the founder and chief architect of Pluta Brothers Design, Inc. He has been working in the field since the late 1970s and has made a career of extending the IBM midrange, starting back in the days of the IBM System/3. Joe has used WebSphere extensively, especially as the base for PSC/400, the only product that can move your legacy systems to the Web using simple green-screen commands. Joe is also the author of E-Deployment: The Fastest Path to the Web, Eclipse: Step by Step, and WDSC: Step by Step. He will be speaking at Local User Group meetings around the country in March, April, May, and June; you can reach him at


MC Press Online