DB2 UDB for iSeries was the first member of the DB2 family that implemented SQL Procedural Language (SQL PL). The support for SQL stored procedures was first shipped in OS/400 V4R2. Since then, every new release has delivered a number of enhancements and improved functionality, so over the years, the iSeries implementation of SQL PL has matured and become a robust programming language alternative for database developers.

The SQL PL, which is based on the ISO/ANSI/IEC SQL Persistent Stored Modules (SQL/PSM) specification, allows developers to write routines and triggers that combine SQL access with flow control structures typical for a procedural language. This type of procedural SQL scripting language proved to be very popular among database programmers. In fact, all major database vendors offer a version of a scripting language with functionality similar to that of DB2 SQL PL. For example, Oracle supports PL/SQL while Microsoft and Sybase use Transact-SQL. Note, however, that only DB2 implements a standard compliant scripting language. Other vendors use their proprietary dialects mainly because they supported SQL procedural languages before the SQL/PSM standard was published.

The support for SQL PL on iSeries has recently been instrumental in several large porting projects in which hundreds of SQL stored procedures, functions, and triggers were successfully ported from other database platforms to iSeries. To facilitate this work, the Rochester development lab has shipped a number of significant enhancements aimed at improving the SQL PL functionality. In this article, I highlight the new functions and cover non-trivial programming techniques that hopefully will increase your SQL PL programming efficiency. Here's the list of topics I describe in greater detail:

- Condition handlers in nested compound statements

- Implicit name qualification (unqualified database object names)

- A technique to avoid orphaned data space locks caused by cursors left open

Condition Handlers in Nested Compound Statements

According to the SQL/PSM specification, an SQL routine (that is, a stored procedure or a function) consists of a single SQL statement. Luckily, the specification also introduces the concept of a compound statement. A compound statement consists of a BEGIN/END block and any number of SQL statements contained within the block. The body of an SQL routine is, in fact, a compound statement. Since V5R1, DB2 UDB for iSeries has supported nested compound statements, which are typically used in complex routines to scope constructs such as variables, cursor declarations, and condition handlers. Only the constructs that were defined within the same or enclosing compound statements are visible. In other words, SQL statements within a compound statement may not be able to refer to constructs declared within another compound statement. The general structure of a compound statement is shown below. Note that the various constructs must be ordered as listed.

<variable declarations>

<cursor declarations>

<condition handler declarations>

<SQL statement list/procedure logic>

END

The SQL PL language elements are well-covered in existing publications. Please refer to the Additional Info section below for a list of relevant publications.

Here, I will focus on condition handlers in nested compound statements. A condition handler is an SQL statement that is executed when an exception or completion condition occurs within the body of a compound statement. The action specified in a handler can be any SQL statement, including a compound statement. The scope of a handler is limited to the compound statement in which it is defined. There are three types of condition handlers:

- Exit--After the SQL statement in the handler is successfully executed, control is returned to the end of the compound statement that defined the handler.

- Continue--After the SQL statement in the handler is successfully executed, control is returned to the SQL statement following the one that raised the exception.

- Undo--Similar to EXIT handlers, control is returned to the end of the compound statement that defined the handler. In addition, any statements executed within the compound statement will be rolled back. Note that the UNDO handlers can be defined only in an ATOMIC compound statement.

So what are the conditions that can cause a handler to be invoked? DB2 SQL PL defines three general conditions that are associated with different SQLSTATEs. An SQLSTATE is a five-character string contained in the DB2 Communications Area (DB2 CA). DB2 runtime sets this value each time an SQL statement is executed. SQLSTATEs are consistent across all DB2 platforms. Here's a short description for the three general conditions:

- SQLEXCEPTION specifies that the handler is invoked when an SQL exception occurs. This corresponds to an SQLSTATE with a class value other than '00', '01', and '02'. The SQLSTATE class is defined by its first two characters.

- SQLWARNING specifies that the handler is invoked when an SQL warning occurs. This corresponds to SQLSTATE class '01'.

- NOT FOUND specifies that the handler is invoked when a NOT FOUND condition occurs. This corresponds to SQLSTATE class '02'.

In addition, it is also possible to provide handlers for a specific condition. For example, a developer may declare a handler for SQLSTATE '02505', which corresponds to the duplicate key exception. Here's a code snippet that illustrates the concepts I discussed in this section:

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO jm_debug ( SQLTEXT, T1 ) VALUES ( ' Level2 - Exit Handler

for DUPLICATE_KEY: Error message: ' || SQLERRM, CURRENT TIMESTAMP) WITH NC;

END ;

The code fragment shows an exit handler for a specific SQL exception. It is invoked when a duplicate key violation occurs in the compound statement that contains the handler. Actually, a better programming technique would be to declare a condition name for a specific SQLSTATE to avoid hard-coding a particular SQLSTATE on a handler declaration. That way, the source code becomes easier to read and maintain. Consider the following code fragment:

DECLARE EXIT HANDLER FOR DUPLICATE_KEY ...

So the condition declaration associates a meaningful, descriptive name with the SQLSTATE that it represents.

DB2 SQL PL also provides two programming constructs that developers can use to throw exceptions and warnings: SIGNAL and RESIGNAL. The SIGNAL statement is used to signal an error or warning condition. It causes an error or warning to be returned with the specified SQLSTATE, along with the message text. The RESIGNAL statement resignals an exception condition, and it can be coded only as part of the condition handler. In the simplest form, RESIGNAL has no additional parameters and is used to reissue the same condition that caused the handler to be invoked.

Now, armed with a basic understanding of compound statements and condition handlers, let's examine a stored procedure called p_nested_test(). The procedure manipulates data in a sample table called COFFEES. First, the routine calculates the average price of coffee brands contained in the table and then inserts a new row. However, the main purpose of the procedure is to illustrate the behavior of condition handlers in nested compound statements. Here's the source code listing:

OUT P_ERROR_IND_OUT CHARACTER(1) )

LANGUAGE SQL

SPECIFIC P_NESTED_TEST

Level_1 :

BEGIN -- Main Procedure Body; Level-1 Compound Statement

DECLARE V_REF_CURSOR_TEXT VARCHAR ( 1024 ) ;

DECLARE V_SQL_STMT_EXEC1 VARCHAR ( 1024 ) ;

DECLARE SQLERRM VARCHAR ( 4000 ) DEFAULT '' ;

DECLARE V_AVG_PRICE DOUBLE PRECISION ;

DECLARE V_ROWS_INSERTED INTEGER DEFAULT 0 ;

DECLARE OBJECT_NOT_FOUND CONDITION FOR SQLSTATE '42704' ;

DECLARE COFFEES_QUERY_FAILED CONDITION FOR SQLSTATE '70010' ;

DECLARE COFFEES_UNKNOWN_AVG_PRICE CONDITION FOR SQLSTATE '70019' ;

DECLARE COFFEES_INSERT_FAILED CONDITION FOR SQLSTATE '70020' ;

DECLARE C_GET_COFFEES CURSOR FOR V_CUR_STMT ;

-- Exit handler scoped to the main procedure body

DECLARE EXIT HANDLER FOR SQLEXCEPTION --[2.3] & [3.2}

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

SET P_ERROR_IND_OUT = 'Y' ;

INSERT INTO JM_DEBUG ( SQLTEXT )

VALUES ( 'Level_1-Exit Handler for sqlexception: Message : '

|| SQLERRM ) WITH NC ;

RESIGNAL ;

END ;

-- Level_1 compound statement body starts here

SET V_REF_CURSOR_TEXT = 'SELECT avg(price) FROM ' || TRIM ( P_TABLE_NAME ) ;

PREPARE V_CUR_STMT FROM V_REF_CURSOR_TEXT ;

INSERT INTO JM_DEBUG ( SQLTEXT )

VALUES ( 'Level_1-Main Procedure Body: V_CUR_STMT prepared' );

-- Level_2_1 compound statement

Level_2_1:

BEGIN

-- exit handler scoped to compound statement Level_2_1

DECLARE EXIT HANDLER FOR SQLEXCEPTION --[2.2]

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO JM_DEBUG ( SQLTEXT )

VALUES (' Level_2_1-Exit Handler for Select: Message: '

|| SQLERRM ) WITH NC ;

SIGNAL COFFEES_QUERY_FAILED SET MESSAGE_TEXT = SQLERRM ;

END ;

-- continue handler scoped to scoped to compound statement Level_2_1

DECLARE CONTINUE HANDLER FOR COFFEES_UNKNOWN_AVG_PRICE --[1.2]

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO JM_DEBUG ( SQLTEXT )

VALUES (' Level_2_1-Handler for SQLSTATE 70019: Message: '

|| SQLERRM ) WITH NC ;

SET V_AVG_PRICE = 0.0 ;

END ;

-- Level_2_1 compound statement body starts here

OPEN C_GET_COFFEES ; --[2.1]

FETCH C_GET_COFFEES INTO V_AVG_PRICE ;

CLOSE C_GET_COFFEES ;

IF V_AVG_PRICE IS NULL THEN --[1.1]

SIGNAL COFFEES_UNKNOWN_AVG_PRICE

SET MESSAGE_TEXT = 'Unknown avg price of coffee.' ;

END IF ;

INSERT INTO JM_DEBUG ( SQLTEXT ) --[1.3]

VALUES ( ' Level_2_1 - Body: v_avg_price = '

|| TRIM ( CHAR ( V_AVG_PRICE ) ) ) WITH NC ;

END Level_2_1;

-- Level_1 body resumes here

INSERT INTO JM_DEBUG(SQLTEXT)

VALUES ( 'Level_1-Resuming processing after end of Level_2_1 compound statement.' ) WITH NC ;

SET V_SQL_STMT_EXEC1 = 'INSERT INTO ' || TRIM ( P_TABLE_NAME )

|| ' VALUES(10, ''Colombian Supreme'', 10, 9.95, 1000, 1000)' ;

Level_2_2:

BEGIN

-- exit handler scoped to compound statement Level_2_1

DECLARE EXIT HANDLER FOR OBJECT_NOT_FOUND

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO JM_DEBUG (SQLTEXT)

VALUES ( ' Level_2-2-Handler for Insert: Message: '

|| SQLERRM ) WITH NC ;

SIGNAL COFFEES_INSERT_FAILED SET MESSAGE_TEXT = SQLERRM ;

END;

-- Level_2_2 compound statement body starts here

EXECUTE IMMEDIATE V_SQL_STMT_EXEC1 ; --[3.1]

GET DIAGNOSTICS V_ROWS_INSERTED = ROW_COUNT ;

INSERT INTO JM_DEBUG (SQLTEXT)

VALUES ( ' Level_2-2-Main Body: ' || TRIM(CHAR(V_ROWS_INSERTED))

|| ' row(s) inserted in COFFEES.' ) WITH NC ;

END Level_2_2;

-- Level_1 body resumes here

INSERT INTO JM_DEBUG (SQLTEXT)

VALUES ( 'Level_1-Resuming processing after end of Level_2_2 compound statement.' ) WITH NC ;

SET P_ERROR_IND_OUT = 'N' ;

END level_1;

The procedure contains four handlers:

- An exit handler defined in Level_1 compound statement--The scope of this handler is the entire stored procedure body.

- An exit and a continue handler defined in Level_2_1 compound statement--The scope of these handlers is limited to the compound statement in which they were declared.

- An exit handler defined in Level_2_2 compound statement--The scope of this handler is limited to the compound statement in which it was declared. In other words, it is not visible to the statements contained in Level_2_1.

To facilitate the analysis, I trace the flow of the control during the execution by writing out messages into a separate table called jm_debug. This is a fairly common debugging technique used by SQL PL developers.

Let's consider several scenarios for the stored procedure execution to see how various programming constructs interact with each other:

Test Case 1: Suppose the COFFEE table initially contains no rows, and the procedure is called with the following parameters:

The first parameter is an input value, and it contains the name of the table to be manipulated. The second parameter is an output parameter, and it returns an error indicator value ('N' for no errors, and 'Y' when errors occurred). The invocation completes successfully with error indicator set to 'N'. The jm_debug contains the following entries:

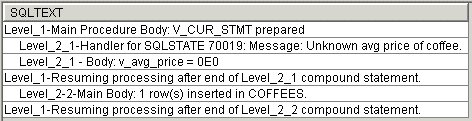

Figure 1: These are the debug messages for Test Case 1. (Click images to enlarge.)

The execution proceeds successfully until the IF statement at [1.1] is reached in compound statement Level_2_1. Since the COFFEES table is empty at this time, the V_AVG_PRICE variable is set to NULL (unknown). It causes the COFFEES_UNKNOWN_AVG_PRICE signal to be fired. This error condition corresponds to a custom SQLSTATE of '70019' (see the condition declarations section in the main stored procedure body). The DB2 runtime first tries to locate a condition handler for this particular condition within the Level_2_1 compound statement. It finds a continue handler at [1.2]. The handler is invoked, and the V_AVG_PRICE is set. The control is returned to statement [1.3], which is the next statement after the SIGNAL statement that raised the exception. The execution successfully continues until the end of the main procedure body is reached. Note that compound statement Level_2_2 inserts a new row into COFFEES.

This example illustrates how to use a custom SQLSTATE and a continue handler to deal with user-defined error conditions in SQL PL. User-defined errors are certain conditions in an application that are defined as errors by the business logic rather than errors generated by DB2 UDB or the operating system. In our case, the business rule is that the unknown value of the average coffee price is disallowed. Refer to the "Improved Handling of Stored Procedure User-Defined Errors" white paper to learn more about user-defined errors in stored procedures.

Test Case 2: This time, I call the stored procedure with an intentionally corrupted first parameter:

The stored procedure returns with the error messages shown below:

Vendor Code: -438

Message: [SQL0438] CO$$EES in SQLTUTOR type *FILE not found.

The jm_debug contains the following entries:

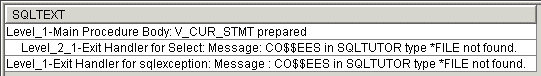

Figure 1: These are the debug messages for Test Case 2.

After invocation, the execution proceeds until the OPEN cursor statement is reached at [2.1]. Since the table name is corrupted, the DB2 runtime fails to find the corresponding table object and it throws an SQL exception with SQLSTATE '42704', indicating that the table (file) was not found. Since the Level_2_1 compound statement does not contain a handler for that specific condition, a general handler for all exceptions is invoked at [2.2]. The handler writes a debug row and resignals the error with the COFFEES_QUERY_FAILED condition mapped to custom SQLSTATE '70010'. The control is transferred to the end of the compound statement Level_2_1 and thus returns to the main procedure body. The error condition signaled in the Level_2_1 is pending, so now the runtime tries to locate an appropriate handler in the Level_1 compound statement (main procedure body). There is no handler for a specific condition COFFEES_QUERY_FAILED, but the runtime finds the general exception handler at [2.3]. After writing a debug message into jm_debug, the handler resignals the error condition that is returned to the caller and manifests itself in the error messages shown above.

Test Case 3: Finally, the stored procedure is called again with the following parameters:

The stored procedure returns with the error messages shown below:

Vendor Code: -803

Message: [SQL0803] Duplicate key value specified.

The jm_debug contains the following entries:

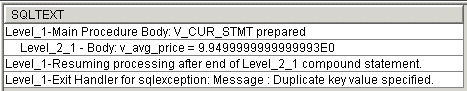

Figure 2: These are the debug messages for Test Case 3.

After invocation, the execution proceeds until the EXECUTE IMMEDIATE statement is reached at [3.1]. The COFFEES table's first column has been defined as the primary key. The row with the key value 10 in the first column already exists because it was inserted when Test Case 1 was run. Therefore, DB2 runtime throws a duplicate key exception that corresponds to SQLSTATE '23505'. First, the DB2 runtime tries to locate a handler for this specific condition in the compound statement Level_2_2. There is no such a handler. Then, the runtime searches for a general handler, which also does not exist in Level_2_2. Control returns to the main procedure body with the error condition pending. Now, the runtime locates the general handler for SQL exceptions and invokes it at [3.2]. After writing a debug message, the handler resignals the original error thrown in the inner Level_2_2 compound statement.

Implicit Schema Qualification for Static and Dynamic SQL Statements in SQL Stored Procedures

On DB2 UDB for iSeries, unqualified alias, constraint, external program, index, nodegroup, package, sequence, table, trigger, and view names are implicitly qualified by the default schema. In the past, SQL stored procedures provided no compile-time options to specify the value of a default schema, so the system used the following rules to resolve unqualified names:

- For static SQL statements, the default schema depends on the naming convention: For SQL naming (*SQL), the default schema is set to the authorization ID (user profile name) in effect when the stored procedure is created. For system naming (*SYS), the default schema is set to the job's library list (*LIBL).

- For dynamic SQL statements, the default schema depends on the naming convention: For SQL naming (*SQL), the default schema is set to the authorization ID (user profile name) in effect when the stored procedure is executed. For system naming (*SYS), the default schema is set to the job's library list (*LIBL).

Note the important difference in behavior for static and dynamic statements when SQL naming is used. To illustrate the default behavior, let's consider the following simple stored procedure:

LANGUAGE SQL

SPECIFIC testdynsql

MODIFIES SQL DATA

MainBody: BEGIN

DECLARE STMT VARCHAR ( 128 ) ;

DECLARE SQLERRM VARCHAR ( 256 ) DEFAULT '';

DECLARE ErrorIndicator CHAR(1) DEFAULT 'N';

Static_Stmt: BEGIN

DECLARE CONTINUE HANDLER FOR SQLEXCEPTION --[2]

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO jm_debug ( SQLTEXT )

VALUES ( 'Static Statement Failed: ' || SQLERRM ) WITH NC;

SET ErrorIndicator = 'Y';

END;

INSERT INTO jm_debug VALUES ( 'Static Statement Succeeded.' ); --[1]

END Static_Stmt;

Dynamic_Statement: BEGIN

DECLARE CONTINUE HANDLER FOR SQLEXCEPTION --[4]

BEGIN

GET DIAGNOSTICS EXCEPTION 1 SQLERRM = MESSAGE_TEXT ;

INSERT INTO jm_debug ( SQLTEXT )

VALUES ( 'Dynamic Statement Failed: ' || SQLERRM ) WITH NC;

SET ErrorIndicator = 'Y';

END;

SET STMT = 'INSERT INTO jm_debug VALUES(''Dynamic Statement Succeeded.'')';

PREPARE S1 FROM STMT ;

EXECUTE S1; --[3]

END Dynamic_Stmt;

IF ErrorIndicator = 'Y' THEN

SIGNAL SQLSTATE '70000' SET

message_text='There were errors. Check jm_debug for details.';

END IF;

END MainBody;

The stored procedure attempts to insert two rows into the jm_debug table. First, at [1] a static insert SQL statement is used. Note that the table name is unqualified, so the task to resolve it to a table object rests with DB2. The errors in the Static_Stmt compound statement are intercepted and handled by the continue handler at [2]. A dynamic SQL statement at [3] is used to insert a second row. The errors are handled by the continue handler in the Dynamic_Stmt compound statement at [4].

So how does DB2 resolve the unqualified reference to the jm_debug table if no default schema is set? Here's one possible scenario:

Test Case 4: The stored procedure is created by a user called DB2ADMIN. In other words, the CREATE PROCEDURE statement is executed under authorization ID DB2ADMIN. The jm_debug table resides in the DB2ADMIN schema. The naming convention in effect is *SQL. Another user called DB2GURU invokes the stored procedure. For simplicity, let's assume that DB2GURU has all object (*ALLOBJ) authority. Before the stored procedure call is executed, DB2GURU sets the PATH to DB2ADMIN so that the stored procedure can be found:

CALL TESTDYNSQL();

The stored procedure returns the following error messages:

Vendor Code: -438

Message: [SQL0438] There were errors. Check jm_debug for details.

The jm_debug contains the following entries:

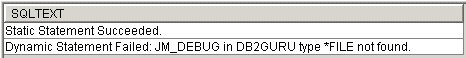

Figure 4: These are the debug messages for Test Case 4.

As expected, the static statement succeeds, because DB2 uses the authorization ID in effect at creation time to resolve the unqualified jm_debug table name. The dynamic statement, however, fails. This time, DB2 uses the runtime authorization ID to resolve the unqualified name. Since there is no jm_debug table in SQL schema named DB2GURU, DB2 signals an SQL exception.

To eliminate the error, DB2GURU could use the SET SCHEMA statement to set the default schema for all dynamic statements run in a given session:

Another approach would be to hardcode the SET SCHEMA statement in the stored procedure. In some environments, however, the application developer wants to be able to determine the default schema for both static and dynamic statements at deployment time rather than hardcoding it in the application code or runtime settings. This requirement becomes especially important for applications that support heterogeneous databases. For example, on Oracle, unqualified names in both static and dynamic statements are, by default, resolved to the creator's authorization ID.

Recently, SQL stored procedure support on iSeries has been enhanced to accommodate Oracle-like behavior for implicit qualification (see the Software Prerequisites section for a list of necessary PTFs to enable the new functionality). Two new options that have been added to the SET OPTION statement can be used on the CREATE PROCEDURE statement:

- Default collection (DFTRDBCOL) specifies the name of the schema identifier used for the unqualified names of the tables, views, indexes, and SQL packages. This parameter applies only to static SQL statements.

- Dynamic default collection (DYNDFTCOL) specifies whether the default schema name specified for the DFTRDBCOL parameter is also used for dynamic statements.

By default, these two attributes are set to the following values: DFTRDBCOL(*NONE) and DYNDFTCOL(*NO). This corresponds to the default DB2 behavior described in Test Case 4. Specifically, DFTRDBCOL(*NONE) means that no default schema has been set, so the rules outlined at the beginning of this section are in effect.

Test Case 5: With the new support, I can add the following SET OPTION statement to the CREATE PROCEDURE statement:

LANGUAGE SQL

SPECIFIC testdynsql

MODIFIES SQL DATA

SET OPTION DYNDFTCOL=*YES

BEGIN

...

END

In order to avoid hardcoding, the DFTRDBCOL keyword has been deliberately omitted. The DFTRDBCOL is inherited from the current environment. So at deployment time, when the target default schema has been determined, the system administrator sets the default schema to, for example, DB2ADMIN and executes the script to create the stored procedure. As a result, the program object associated with the stored procedure has the following attributes: DFTRDBCOL=DB2ADMIN and DYNDFTCOL=*YES. This means that at runtime both static and dynamic unqualified statements will be implicitly qualified with DB2ADMIN.

Let's see how the modified stored procedure works. After opening a new connection, DB2GURU calls the stored procedure as shown below:

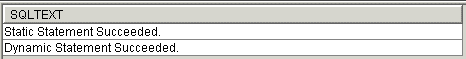

This time, there is no need to use SET SCHEMA or change the default schema setting in the connection environment. The stored procedure completes successfully. The jm_debug contains the following entries:

Figure 3: These are the debug messages for Test Case 5.

Sometimes, even experienced iSeries developers tend to confuse the ability to resolve unqualified names to database objects with authority required to actually access them. As described in this section, the implicit qualification is controlled by DFTRDBCOL and DYNDFTCOL while the access authority is managed by USRPRF and DYNUSRPRF attributes. Here's a short description of the latter two parameters:

- User profile (USRPRF) specifies the user profile that is used when the compiled routine (or SQL package) is run, including the authority to each object in static SQL statements. The profile of either the *OWNER or the *USER is used to control access to objects.

- Dynamic user profile (DYNUSRPRF) specifies the user profile used for dynamic SQL statements. The profile of either the program's user or the program's owner is used to control access to objects.

By default, these parameters are set to USRPRF(*NAMING) and DYNUSRPRF(*USER).

I used the *SQL naming convention in Test Case 5, which means that the *OWNER (DB2ADMIN) profile was used for the static statement and the *USER (DB2GURU) profile was used for the dynamic statement. So Test Case 5 executed successfully only because DB2GURU had *ALLOBJ authority; therefore, the access to jm_debug in the dynamic statement was implicitly granted. Typically, application users run under user profiles with limited authority. Here, the DYNUSRPRF comes in handy. I can specify DYNUSRPRF(*OWNER) on the CREATE PROCEDURE statement so that the dynamic statement also runs under the *OWNER (DB2ADMIN) user profile. That way, I can make sure that the statement will not fail due to the lack of access authority.

Here's the final CREATE PROCEDURE statement that takes care of both implicit qualification and access authority:

LANGUAGE SQL

SPECIFIC testdynsql

MODIFIES SQL DATA

SET OPTION DYNDFTCOL=*YES, DYNUSRPRF=*OWNER

BEGIN

...

END

Note: Remember to grant the execute privilege on the stored procedure to the user who needs to invoke it. For example:

In addition, user DB2USR needs at least the *USE authority to the library (schema) that contains the stored procedure's *PGM object. Here's a sample CL command:

Avoid Orphaned Data Space Locks Caused by Cursors Left Open

The open cursor results in a read lock held over the data space. This lock is needed to preserve the integrity of the open cursor structure that points to that data space. The open cursor is not implicitly closed upon the return from a stored procedure. There are two default compile/link time parameters that are responsible for this behavior:

- CLOSQLCSR(*ENDACTGRP)--This parameter means that the open cursors are closed when the activation group ends.

- ACTGRP(*CALLER)--This parameter means that a stored procedure runs within the same activation group as the calling process. This is required for performance reasons. Consequently, the cursors that are not closed in a stored procedure will be still open upon return to the caller process (job).

Currently, these parameters cannot be easily changed for an SQL stored procedure.

Under certain circumstances, the described behavior can result in locks that persist as long as a given job (activation group) is active. Let's suppose we executed the following procedure from the SQL Script utility:

language sql

p1: begin

declare v_cof_name varchar(32);

declare c1 cursor for

SELECT cof_name FROM COFFEES;

declare exit handler for sqlexception

resignal ; --[4]

OPEN c1; --[1]

FETCH c1 into v_cof_name;

COMMIT HOLD; --[2]

SIGNAL SQLSTATE '70000'

set message_text='Exit before cursor closed';--[3]

CLOSE c1;

END p1;

In the above example, cursor c1 is opened at [1]. At [2], the transaction is committed with hold so that the cursors are not closed. Then at [3], an exception is signaled so that control is transferred to the exit handler at [4]. The exit handler just resignals the exception, and the procedure returns to the caller. The cursor c1 is still open. The Run SQL Script utility does not allow you to explicitly close this cursor. To get rid of the lock, you need to close the connection, which releases the QZDASOINIT job that is associated with this session.

To avoid this situation, you need to explicitly close the cursor in the stored procedure. The following code illustrates how this can be achieved with the nested error handler:

language sql

p1: begin

declare v_cof_name varchar(32);

declare c1 cursor for

SELECT cof_name FROM COFFEES;

declare exit handler for sqlexception --[1]

begin

declare i int;

declare continue handler for sqlstate '24501' --[3]

set i =0;

close c1; --[2]

resignal;

end;

OPEN c1;

FETCH c1 into v_cof_name;

COMMIT HOLD;

CLOSE c1;

SIGNAL SQLSTATE '70000'

set message_text='Exit after cursor closed';

END p1;

In the above code, the handler intercepts the signal at [1]. At [2], we attempt to close the cursor. If the cursor is open, the close succeeds and the resignal is executed. If the cursor is already closed, the statement at [2] throws an SQL exception with SQL state of '24501'. This is intercepted by the inner continue handler at [3]. After basically ignoring the exception, the control returns to the next statement after [2], which is resignal. The SET statement in the nested handler is used as a workaround for a "do nothing" statement that currently does not exist in SQL PL.

Software Prerequisites

For maximum database server stability, you should install the latest database group PTF (SF99502 for V5R2 or SF99503 for V5R3) on the iSeries server. In addition, the following table lists the PTFs required for the DB2 SQL PL enhancements and improvements discussed in this article:

|

PTFs Required for the DB2 SQL PL Enhancements

|

||

|

Enhancement

|

OS/400 V5R2

|

i5/OS V5R3

|

|

Implicit qualification

(DFTRDBCOL, DYNDFTCOL options) |

SI16196, SI16197,

SI16198 |

SI18022, SI18024,

SI18025,SI18029 |

|

Condition handlers in complex nested

compound statements |

SI17232,SI17233

|

SI18929

|

Go Forth

Hopefully, this article has given you a better understanding of the more advanced capabilities of DB2 SQL PL. This robust and mature implementation of the SQL-based programming language can significantly reduce the cost of database porting projects as well as facilitate modernization of existing iSeries applications. The Rochester development lab intends to provide exciting functional and performance SQL PL improvements in the future releases of i5/OS, so stay tuned!

Additional Info

The following publications can be helpful to those who want to learn more about the DB2 SQL Procedural Language:

- DB2 SQL PL Essential Guide for DB2 UDB on Linux, UNIX, Windows, i5/OS, and z/OS, IBM Press, ISBN 0-13-147700-5

- Cross-Platform DB2 Stored Procedures: Building and Debugging, ITSO Redbook, SG24-5485

- Stored Procedures, Triggers and User Defined Functions on DB2 Universal Database for iSeries, ITSO Redbook, SG24-6503

Jarek Miszczyk is the Senior Software Engineer, PartnerWorld for Developers, IBM Rochester. He can be reached by email at

More than ever, there is a demand for IT to deliver innovation. Your IBM i has been an essential part of your business operations for years. However, your organization may struggle to maintain the current system and implement new projects. The thousands of customers we've worked with and surveyed state that expectations regarding the digital footprint and vision of the company are not aligned with the current IT environment.

More than ever, there is a demand for IT to deliver innovation. Your IBM i has been an essential part of your business operations for years. However, your organization may struggle to maintain the current system and implement new projects. The thousands of customers we've worked with and surveyed state that expectations regarding the digital footprint and vision of the company are not aligned with the current IT environment. TRY the one package that solves all your document design and printing challenges on all your platforms. Produce bar code labels, electronic forms, ad hoc reports, and RFID tags – without programming! MarkMagic is the only document design and print solution that combines report writing, WYSIWYG label and forms design, and conditional printing in one integrated product. Make sure your data survives when catastrophe hits. Request your trial now! Request Now.

TRY the one package that solves all your document design and printing challenges on all your platforms. Produce bar code labels, electronic forms, ad hoc reports, and RFID tags – without programming! MarkMagic is the only document design and print solution that combines report writing, WYSIWYG label and forms design, and conditional printing in one integrated product. Make sure your data survives when catastrophe hits. Request your trial now! Request Now. Forms of ransomware has been around for over 30 years, and with more and more organizations suffering attacks each year, it continues to endure. What has made ransomware such a durable threat and what is the best way to combat it? In order to prevent ransomware, organizations must first understand how it works.

Forms of ransomware has been around for over 30 years, and with more and more organizations suffering attacks each year, it continues to endure. What has made ransomware such a durable threat and what is the best way to combat it? In order to prevent ransomware, organizations must first understand how it works. Disaster protection is vital to every business. Yet, it often consists of patched together procedures that are prone to error. From automatic backups to data encryption to media management, Robot automates the routine (yet often complex) tasks of iSeries backup and recovery, saving you time and money and making the process safer and more reliable. Automate your backups with the Robot Backup and Recovery Solution. Key features include:

Disaster protection is vital to every business. Yet, it often consists of patched together procedures that are prone to error. From automatic backups to data encryption to media management, Robot automates the routine (yet often complex) tasks of iSeries backup and recovery, saving you time and money and making the process safer and more reliable. Automate your backups with the Robot Backup and Recovery Solution. Key features include: Business users want new applications now. Market and regulatory pressures require faster application updates and delivery into production. Your IBM i developers may be approaching retirement, and you see no sure way to fill their positions with experienced developers. In addition, you may be caught between maintaining your existing applications and the uncertainty of moving to something new.

Business users want new applications now. Market and regulatory pressures require faster application updates and delivery into production. Your IBM i developers may be approaching retirement, and you see no sure way to fill their positions with experienced developers. In addition, you may be caught between maintaining your existing applications and the uncertainty of moving to something new. IT managers hoping to find new IBM i talent are discovering that the pool of experienced RPG programmers and operators or administrators with intimate knowledge of the operating system and the applications that run on it is small. This begs the question: How will you manage the platform that supports such a big part of your business? This guide offers strategies and software suggestions to help you plan IT staffing and resources and smooth the transition after your AS/400 talent retires. Read on to learn:

IT managers hoping to find new IBM i talent are discovering that the pool of experienced RPG programmers and operators or administrators with intimate knowledge of the operating system and the applications that run on it is small. This begs the question: How will you manage the platform that supports such a big part of your business? This guide offers strategies and software suggestions to help you plan IT staffing and resources and smooth the transition after your AS/400 talent retires. Read on to learn:

LATEST COMMENTS

MC Press Online