Cable clutter, white noise, and even your carbon footprint can be reduced via virtualization, but is the technology ready for prime time?

Back at the beginning of the year, I talked to you about virtualization and specifically about VMWare. At the time, I wanted to see if I could find some way around the artificial limitation that IBM had imposed on my expensive xSeries machine (namely, that you couldn't run high-resolution graphics on it). The results were mixed; it worked but not quickly, nor was it particularly stable. At the end of the research, my conclusion was that for high-resolution graphics, virtual reality was a nice place to visit but I wouldn't want to live there.

I'm going to return to that product again, but this time I'll address a different issue: server sprawl.

Well, to Be More Precise, Workstation Sprawl

In my case, I don't have that many servers. Besides my two System i machines, my primary server is the xSeries, which has turned into a very expensive but very fast network-attached storage device. But in the meantime, I have suffered from some serious workstation sprawl.

I have my old workstation, a P4 Windows 2000 machine from the turn of the millennium. It rarely gets powered up, but it has information that I might need some day. Next, I have my previous workstation, a pretty hefty W2K Pro machine I had built back in 2003 by a local computer shop. That in turn was replaced by two machines a couple of years ago: an XP Home Internet machine (primarily for email, surfing, and writing) and an XP Pro development machine (where WDSC lives). The development machine, by the way, was intended to be a medium-level gaming and home theater machine, but it got co-opted when the W2K Pro workstation got hit hard by a virus.

Add into that mix a little Linux box running email, and basically I have six PCs in my office: two servers and four workstations. That's a lot of real estate, and a lot of cabling, and just a lot of clutter, even if two of the machines rarely get powered up. And really, the two machines that rarely get used are something of a crapshoot each time I apply power; there will come a day for each one when I'm going to get a disk error and then that machine moves from Workstation Row to the Land of Dead PCs (the upper shelf of the office closet).

P2V to the Rescue!

Ah, how did we get this far without a TLA (a "three-letter acronym" in case you just woke up, Rip Van Winkle)? Well, technically this isn't a TLA, because the middle character is a digit, not a letter, but that's a quibble. P2V is the industry acronym for physical-to-virtual, and it describes the process of taking a living, breathing physical machine and sucking it into a "virtual machine" that can then run somewhere else.

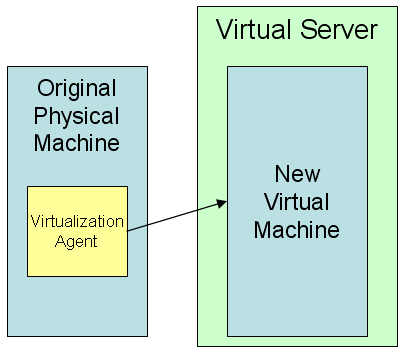

Figure 1: This is virtualization in a nutshell.

In general terms, virtualizing a physical machine means loading software on it that in turn creates an image of the machine, which can then be run somewhere else. In almost all cases, the virtual server and the original physical machine need to use the same processor architecture (x86, for example). In theory, you can run software built for one processor in an emulator on a different processor, but that sort of cross-processor emulation is rare and usually quite slow.

Far more common is the ability to run one machine inside a virtual workspace on another machine with the same processor. The virtualized operating system is called a "guest OS" as opposed to the "host OS." For example, you can run a guest version of Linux inside a Windows host OS. This is not dual booting; with the appropriate software, you can copy and paste between the two operating systems and technically even run a client program on one OS that talks to a server program on the other.
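To make the same-processor rule concrete, here is a minimal Python sketch that decides whether a guest can run natively on a host or would need cross-processor emulation. The alias table and both function names are hypothetical and deliberately simplified; real virtualization products also check CPU features such as hardware virtualization support, which this ignores. The only real library call is `platform.machine()`, which reports the current machine's architecture string.

```python
import platform

# Hypothetical alias table: different OSes report the same architecture
# under different names (64-bit Windows says "AMD64", Linux says "x86_64").
_ARCH_ALIASES = {
    "x86_64": "x86_64", "amd64": "x86_64", "x64": "x86_64",
    "i386": "x86", "i486": "x86", "i586": "x86", "i686": "x86", "x86": "x86",
    "aarch64": "arm64", "arm64": "arm64",
    "ppc64": "ppc64",
}


def normalize_arch(name: str) -> str:
    """Map an OS-reported machine string to a canonical architecture family."""
    key = name.strip().lower()
    return _ARCH_ALIASES.get(key, key)


def can_run_natively(guest_arch: str, host_arch: str) -> bool:
    """True if a guest built for guest_arch can run without cross-processor emulation.

    Simplification: identical families match, and a 32-bit x86 guest is
    also allowed on a 64-bit x86 host.
    """
    guest = normalize_arch(guest_arch)
    host = normalize_arch(host_arch)
    if guest == host:
        return True
    return guest == "x86" and host == "x86_64"


if __name__ == "__main__":
    host = platform.machine()
    print(host, "can host a 32-bit x86 guest natively:", can_run_natively("i686", host))
```

On a typical 64-bit Windows or Linux PC, `platform.machine()` reports `AMD64` or `x86_64`, which normalize to the same family, so a 32-bit Windows 2000 guest would be allowed while a PowerPC guest would not.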

This basic procedure can be accomplished in several ways, some of which can be tied directly into your backup and recovery procedures. If, for example, your backup process includes a product like Norton Ghost to periodically image your machine, you can take that backup image and then use a tool to convert it into a virtual image.

Other options include boot disks or installed software that will take the contents of your machines and format them into a virtual machine on a network server. Each of these requires some downtime, and depending on the size of your disk drives, your network speed, and so on, it can take many hours. That's why it's reasonable to think about incorporating this into your backup plan, particularly if the virtualization tool requires your machine to be unavailable during the process.
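Because the copy time is what drives that downtime, a back-of-the-envelope estimate helps with scheduling. The Python sketch below is a rough model, not a measurement: the 60 percent default efficiency is an assumption standing in for protocol overhead and disk contention, the model ignores disk read speed entirely, and the function name is hypothetical.

```python
def estimate_copy_hours(disk_gb: float, link_mbps: float, efficiency: float = 0.6) -> float:
    """Rough hours to copy disk_gb gigabytes over a link_mbps network link.

    disk_gb uses decimal gigabytes (10**9 bytes), as drive makers do.
    efficiency is an assumed fraction of the nominal link speed actually achieved.
    """
    if link_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link speed must be positive and efficiency in (0, 1]")
    bits = disk_gb * 1e9 * 8
    seconds = bits / (link_mbps * 1e6 * efficiency)
    return seconds / 3600


if __name__ == "__main__":
    # An 80GB drive over 100Mbit Ethernet, then over gigabit Ethernet.
    print(f"{estimate_copy_hours(80, 100):.2f} hours")
    print(f"{estimate_copy_hours(80, 1000):.2f} hours")
```

With those assumptions, an 80GB drive over 100Mbit Ethernet works out to roughly three hours of raw copying (about 2.96 hours) before any conversion work, while gigabit Ethernet would cut the copy itself to well under an hour.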

Virtualizing a Multi-Machine Network

Virtualization has a variety of uses, from backup to testing to deployment. A perfect example is my little mail server. It's a small Linux machine doing nothing but sitting there. It takes up space and power and has more moving parts that can go poof in the night. So it would be to my advantage to convert it to a virtual machine that runs inside a larger server. A Linux mail server wouldn't require much server CPU power at all, and since its network bandwidth is pretty much limited by my Internet pipe, it's not going to be a drain on my network either. So basically, I could stuff my mail server into a virtual machine on a primary server for the incremental cost of a few GB of disk storage, half a GB of RAM, and at most a couple of hundred extra megahertz on the CPU (it's rare that network servers are CPU-constrained, at least today).

Other workstations can be converted as well. In fact, it would make sense to convert as many of my machines as feasible into images on the server. Remember, I have two old workstations that are lying around primarily for decorative purposes and the very occasional archive retrieval. I could convert those machines to virtual images that would for the most part lie dormant on disk.

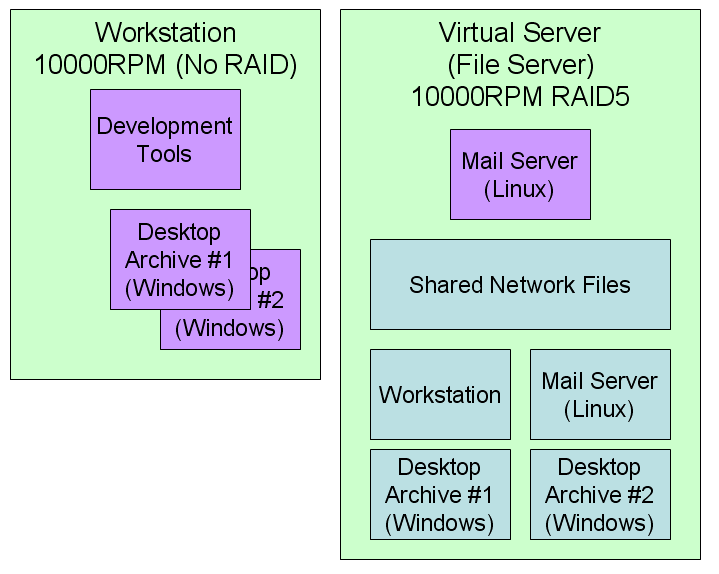

Figure 2: This networked environment has multiple virtualized machines.

If you look at Figure 2, you'll see a workstation that has a high-speed disk drive but limited storage requirements. Basically, that machine would have development software installed and perhaps working copies of current projects. Critical documents would be backed up to the virtual server. When needed, the archived desktop virtual machines could be loaded and run on the workstation.

Note that all of the virtual machine images, including the mail server, the desktop archives, and an image of the workstation, would reside on the virtual server. The workstation image would be updated whenever new software was installed on the workstation and could be used in case of catastrophic failure of the workstation.

Yes, I'd need a powerful workstation, and the archive machines might take a little while to load, but the archival virtual machines are not required to run quickly; they just need to be stable and available when I need them. The file server would use its extra cycles to take care of the mail, and suddenly, three machines are gone! I even become a greener company for going virtual! Heck, the physical machines that aren't too out-of-date can even be donated to a worthy cause.

The Cost of Virtualization

The biggest cost associated with this scenario is wasted disk space on the file server. The virtual images tend to take up a large amount of disk (they are, after all, complete replicas of the entire disk of the old machines), and most of them are rarely used. An image is only read and written while its virtual machine is running, and in this environment the desktop archives will rarely be started; even the mail server, which runs all the time, generates only light disk traffic. If I were to put the virtual images onto a couple of large, redundant near-line disk units, they'd take up none of the expensive real estate on the high-speed disk drives.

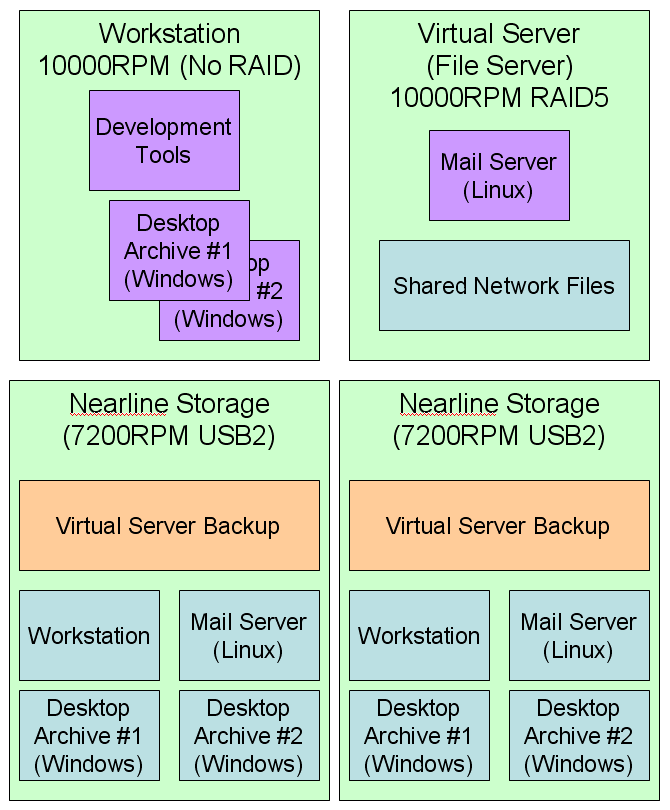

Figure 3: Use inexpensive redundant drives to store the rarely changed virtual images.

I could easily set up a redundant pair of 750GB USB2 drives for under $200 apiece (nowadays you can get brand-name USB-attached external storage for less than a quarter a gigabyte). I can even use those drives to back up the virtual server; one of them could act as a network failsafe in case of catastrophic failure of the file server. Meanwhile, the network server itself is dedicated to file serving and the tiny overhead of the mail server.
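The drive arithmetic is easy to check. This short Python sketch uses the quarter-per-gigabyte figure from the text as an upper bound; the function names are hypothetical, and the image sizes in the example are made-up placeholders, not measurements of my machines. In a mirrored pair each drive holds a full copy, so the usable capacity is one drive, not two.

```python
from __future__ import annotations

PRICE_PER_GB = 0.25  # upper bound: "less than a quarter a gigabyte"
DRIVE_GB = 750       # one USB2 external drive


def drive_cost(capacity_gb: float, price_per_gb: float = PRICE_PER_GB) -> float:
    """Worst-case price of one drive at the given price per gigabyte."""
    return capacity_gb * price_per_gb


def fits_on_mirror(image_sizes_gb: list[float], drive_gb: float = DRIVE_GB) -> bool:
    """A mirrored pair stores everything twice, so usable space is one drive."""
    return sum(image_sizes_gb) <= drive_gb


if __name__ == "__main__":
    # Hypothetical image sizes in GB: mail server, two archive desktops, workstation.
    images = [8, 40, 120, 80]
    one = drive_cost(DRIVE_GB)
    print(f"one drive: ${one:.2f}, pair: ${2 * one:.2f}")
    print("images fit:", fits_on_mirror(images))
```

At $187.50 per drive in the worst case, each one comes in under the $200 mark, and the placeholder images total 248GB, a third of one drive.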

Going Virtual

So it's clear that converting my physical machines to virtual machines has many immediate benefits, including reduced clutter and better use of my resources. Other short- to medium-term benefits include the ability to create test versions of my virtual machines. For example, I might want to play around with my mail server. I can do this on a separate virtual machine and not put it into production until I get it entirely working. And even then, if I uncover a catastrophic glitch, I can go back to the previous version of the virtual image. I can do the same thing with my workstation.
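One low-tech way to keep that previous version around is simply to copy the virtual machine's folder before making changes. The Python sketch below assumes the VM is powered off and that all of its files live in a single directory; the paths and function names are hypothetical, and it uses plain file copies rather than any snapshot feature built into VMWare's products.

```python
import shutil
import tempfile
from datetime import datetime
from pathlib import Path


def snapshot_vm_dir(vm_dir: Path, archive_root: Path) -> Path:
    """Copy a powered-off VM's folder to a timestamped archive folder."""
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S-%f")
    dest = archive_root / f"{vm_dir.name}-{stamp}"
    shutil.copytree(vm_dir, dest)  # creates archive_root if it is missing
    return dest


def restore_vm_dir(snapshot: Path, vm_dir: Path) -> None:
    """Throw away the current VM folder and put the saved copy back."""
    if vm_dir.exists():
        shutil.rmtree(vm_dir)
    shutil.copytree(snapshot, vm_dir)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        vm = root / "mailserver"  # hypothetical VM folder
        vm.mkdir()
        (vm / "mailserver.vmdk").write_text("known-good")
        saved = snapshot_vm_dir(vm, root / "archive")
        (vm / "mailserver.vmdk").write_text("broken experiment")
        restore_vm_dir(saved, vm)
        print((vm / "mailserver.vmdk").read_text())  # known-good
```

Full copies are crude and use a lot of disk, but they fit the near-line storage plan: the archive folders can live on the cheap USB drives.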

Remember, though, that imaging an existing machine is not a trivial exercise. It can take several hours even for a relatively small machine. And while the process has gotten somewhat easier over the years, there is still a level of black magic involved. The free solution for Linux involves using g4l (Ghost for Linux) to create a ghost image and then loading that into a virtual machine. It's a multi-step process and not for the faint of heart.

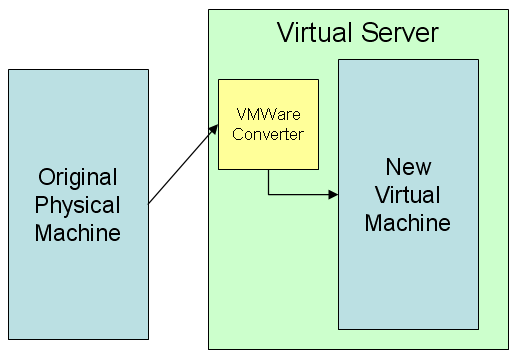

Windows machines can be assimilated a bit easier; VMWare provides a piece of software called VMWare Converter that will suck in an entire physical machine and convert it to a virtual machine with very little operator intervention. I successfully managed to import my oldest machine in about six hours.

Figure 4: Here's one of the cool mechanisms that VMWare provides.

Unfortunately, there are some strict limitations on the automatic import tool, such as the fact that it works only on Windows machines. The other solution, a standalone boot CD, is available only with the commercial version of the product.

A more serious issue for me is the lack of support for SATA RAID setups. My second archive desktop was state of the art in its day, and it has two drives using RAID 1 mirroring. At the moment, I cannot make a virtual machine from it using any of the VMWare tools. I'm going to see if I can convince Ghost to do the job, but I'm not hopeful. If I can't virtualize that second machine, it really removes a lot of the glitter for me. Note that I would be perfectly happy removing the RAID capabilities, which should be entirely transparent to the operating system anyway. But a lot of research has so far failed to turn up any answers.

So Will Virtualization Work for Me?

I want to say yes. Just by going through the pictures, you can see that going virtual provides some huge benefits, especially to those of us who have gone through multiple upgrades over the years and have older machines sitting on the network "just in case."

Additionally, virtualization holds a powerful allure when you talk about configuration and deployment. Being able to make major changes (including operating system and other software upgrades) in a virtual sandbox and test them without ever impacting a production machine...well, that's priceless. This is especially true when the cutover can be as simple as switching a table entry in your Network Address Translation (NAT) configuration to move from the old virtual server to the new virtual server.
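The cutover idea can be modeled in a few lines. This Python sketch treats the NAT configuration as a simple table of port-forwarding rules; real routers each have their own configuration syntax, so the `PortForward` type, the `cut_over` function, and the private addresses in the example are all hypothetical. Returning the old rule makes rolling back just as simple as cutting over.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class PortForward:
    """One inbound NAT rule: traffic on external_port goes to internal_ip:internal_port."""
    external_port: int
    internal_ip: str
    internal_port: int


def cut_over(table: dict[int, PortForward], external_port: int, new_ip: str) -> PortForward:
    """Point an existing rule at a new virtual server; return the old rule for rollback."""
    old = table[external_port]
    table[external_port] = PortForward(external_port, new_ip, old.internal_port)
    return old


if __name__ == "__main__":
    # Hypothetical addresses: old mail VM at .20, upgraded mail VM at .21.
    nat = {25: PortForward(25, "192.168.1.20", 25)}
    previous = cut_over(nat, 25, "192.168.1.21")
    print(nat[25])
    nat[25] = previous  # rollback if the new server misbehaves
    print(nat[25])
```

Nothing about the old virtual server changes during the cutover, which is what makes the rollback safe.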

In the long run, virtualization for the SMB is almost a requirement. The reduced physical infrastructure is only one of the issues. Virtualization becomes even more important if you intend to do a lot of open-source work. That's because many new applications are being made available as virtual appliances (typically as ready-to-run VMWare images). The biggest problem with having multiple open-source projects is trying to make sure that none of the bits of Application A cause a failure in Application B. The only way to find out is to run the applications together and keep your fingers crossed, and if you're not an expert in the various technologies, diagnosing any problems can be an exercise in futility. By running them in separate virtual machines, you can avoid any unwanted side effects without having to deploy yet another server machine.

Yes, you need more CPU and RAM resources, but those expenses are more than offset by the savings from having fewer machines or by the reduced disk drive costs on your file server. A good CPU is a couple of hundred bucks, and you can get 4GB of DDR2 RAM on TigerDirect for under $100. Now, you might start running into problems if you run too many virtual machines; 32-bit operating systems still can access only about 4GB of memory. But by the time you need that much memory for your virtual machines, your business model ought to support another server or at least a 64-bit operating system.
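A quick budget shows how far the roughly 4GB ceiling stretches. In this Python sketch, the half gigabyte for the mail server comes from the estimate earlier in this article; the host OS allowance and the archive desktops' figures are assumptions, and the function name is hypothetical. In practice a 32-bit Windows host often exposes somewhat less than 4GB, which is why the limit is a parameter.

```python
from __future__ import annotations

ADDRESSABLE_GB_32BIT = 4.0  # "only about 4GB" for a 32-bit OS


def ram_headroom_gb(guest_ram_gb: dict[str, float], host_os_gb: float,
                    limit_gb: float = ADDRESSABLE_GB_32BIT) -> float:
    """Memory left after the host OS and all running guests; negative means over budget."""
    return limit_gb - host_os_gb - sum(guest_ram_gb.values())


if __name__ == "__main__":
    guests = {
        "mail server": 0.5,       # from the mail-server estimate in the text
        "archive W2K": 0.5,       # assumed
        "archive W2K Pro": 0.75,  # assumed
    }
    print(ram_headroom_gb(guests, host_os_gb=1.0))  # 1.25
```

Even with all three guests running at once, that leaves about 1.25GB to spare under these assumptions.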

So I guess that right now I have nothing bad to say about virtualization. My only hesitation is whether or not to recommend VMWare specifically as the Holy Grail of SMB virtualization. And that's not because it's a bad company or because the product is technically deficient. Quite the contrary; the folks at VMWare have done an outstanding job and have carved themselves an undeniable leadership position in the field. However, there are still some rough spots (the lack of RAID support is a big one), and I haven't completely identified all the pieces necessary to be able to make a totally "free" virtual solution.

In fact, I really haven't been able to locate definitive answers on the costs for the various commercial options. I think, but am not 100 percent sure, that Symantec's Ghost software can be used to capture the state of any machine and that this ghost image can then be converted to a virtual machine readable by the (free) VMWare server software. Ghost is only $70, and if that's true, it could be a very cost-effective solution.

And that's important, because while a number of other virtualization products exist, they have various issues, the most important of which is probably cost. Prices range from about $100 a conversion to the dreaded "contact our sales department," which nearly always means it's too expensive for a small shop.


MC Press Online