Remember when you could leave your office at night, lock the door to the computer room, and feel secure in the knowledge that your company's information system was safe? System security back then was in the shape of a metal key: something you could slip into your pocket or hang on a hook. Of course, no one needs to tell you how much things have changed for the worse. If your system is hooked up to the Internet (and what business system isn't these days?), the only truly secure thought running through your mind at the end of the day is that someone, somewhere, is trying to break into your system. Password security? Who cares? That's the least of your worries, as email worms and viruses, denial of service, and a host of other network shenanigans are threatening your installation. How bad is it? Consider the following analytic study created at UC Berkeley's International Computer Science Institute (ICSI).

The study, entitled "How to Own the Internet in Your Spare Time," was prepared by three authors for the ICSI Center for Internet Research, and it charts the monstrous threat and rapid rate of infection of the nefarious worms called Code Red, Code Red I, Code Red II, and Nimda. If anyone in your management is dismissive of the importance of Internet security, they should quickly peruse this paper.

Seeing "Code Red"

Worms spread in machines by replicating themselves and then passing their infectious code to other machines. No better example could be cited than the evolutionary rise of the Code Red worms of 2001.

Code Red, you may remember, was a worm that was first observed on July 13, 2001. It spread by compromising Microsoft IIS Web servers. This vulnerability in IIS had been discovered only the month before, and that threat was published on June 18 by eEye Digital Security. Once Code Red infected an IIS host, it spread by launching 99 threads that generated random IP addresses; then, it tried to compromise those IP addresses using the same vulnerability. These newly infected IIS hosts then repeated the cycle, over and over again, defacing the Web site of every machine they touched.

Fortunately, the initial version of Code Red (which later became known as CRv1) had a bug: The random number generator was apparently initialized with a fixed "seed" number, so that all the generated copies of the worm in a particular thread, on all hosts, generated and attempted to compromise exactly the same sequence of IP addresses. As a result, CRv1 never compromised an excessive number of machines because it had a linear path of progressive infection. While this didn't give much solace to any company that became infected, it was significant in that the CRv1 path didn't scatter as quickly as it might have. Still, as fast as Microsoft attempted to identify the means of preventing the infection, the author of the worm was faster.
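The effect of a fixed seed is easy to see in a harmless simulation. The following is only a sketch, not a reconstruction of the worm: it uses Python's standard pseudo-random generator, and the function name `scan_sequence` and the seed values are made up for illustration. It generates address strings and nothing else; no network traffic is involved.

```python
import random

def scan_sequence(seed, count):
    """Return the first `count` pseudo-random IPv4 addresses produced from `seed`."""
    rng = random.Random(seed)
    return [
        ".".join(str(rng.randrange(256)) for _ in range(4))
        for _ in range(count)
    ]

FIXED_SEED = 2001  # hypothetical value; the real seed is not given in the article

# Two simulated hosts running the same generator with the same fixed seed.
host_a = scan_sequence(FIXED_SEED, 1000)
host_b = scan_sequence(FIXED_SEED, 1000)

# Both hosts walk the identical list, so the second host adds no new targets.
combined_fixed = set(host_a) | set(host_b)

# With a different seed on each host, the two lists barely overlap.
host_c = scan_sequence(1, 1000)
host_d = scan_sequence(2, 1000)
combined_varied = set(host_c) | set(host_d)

print(host_a == host_b)
print(len(combined_fixed), len(combined_varied))
```

With the shared seed, the second host contributes nothing new, which is why every additional CRv1 infection simply retraced the path of the ones before it.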

Indeed, several days later, on July 19, 2001, a second version of the Code Red worm began to spread--something that had been hypothesized in academic circles via mailing list discussions about CRv1. This second worm became known immediately as Code Red I version 2 or--more cryptically--CRv2. CRv2 used the same code base as CRv1, but it seeded its random number generator with a random value instead of a fixed one, so each copy probed a different sequence of addresses. It did not deface Web sites, though. Instead, it had a DDoS payload that targeted the White House's Web address. This new version spread exceptionally fast until almost all vulnerable IIS servers on the Internet were compromised. However, it turned itself off at midnight that night and then lay dormant until August 1, 2001. Code Red I has continued to activate on a monthly cycle ever since and--based upon the research presented in the study--continues to gain strength, probably because of its relatively benign impact and Webmasters' notorious inattention. Still, it remains a potent act of Internet terrorism because it had a specific purpose: to attack the White House Web server.
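To get a feel for why independent random scanning saturates the vulnerable population so quickly, consider a toy epidemic model. This is a minimal sketch under invented assumptions: the address space, the number of vulnerable hosts, and the probes per step are arbitrary small numbers chosen so that the simulation runs quickly, not measurements from the study. Hosts are plain integers, and nothing is sent anywhere.

```python
import random

def simulate_spread(address_space=20_000, vulnerable=1_000,
                    probes_per_step=10, steps=60, seed=0):
    """Toy random-scanning epidemic; returns the infected count after each step."""
    rng = random.Random(seed)  # seeds the simulation only, for repeatable runs
    vulnerable_hosts = set(rng.sample(range(address_space), vulnerable))
    infected = {min(vulnerable_hosts)}  # start from a single infected host
    history = [len(infected)]
    for _ in range(steps):
        newly_infected = set()
        for _host in infected:
            # Each infected host probes addresses picked uniformly at random.
            for _ in range(probes_per_step):
                target = rng.randrange(address_space)
                if target in vulnerable_hosts and target not in infected:
                    newly_infected.add(target)
        infected |= newly_infected
        history.append(len(infected))
    return history

history = simulate_spread()
for step in range(0, len(history), 10):
    print(step, history[step])
```

In a model like this, the count creeps along at first, climbs steeply once enough hosts are scanning, and then flattens as the remaining vulnerable machines run out.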

Imagine, if you will, the impact that such a worm might have had on your business had your company's Web site been the target. Imagine how heavy the impact was on the infrastructure of the Internet itself.

But Code Red and Code Red I were nothing compared to Code Red II.

Localized Scanning--Code Red II

The Code Red II worm was released on August 4, 2001--less than a month after Code Red I. It actually contained a comment in the code that stated "Code Red II," but according to the authors of the study, it didn't contain any of the base code from the original Code Red worms. It did, however, use the same vulnerability of the IIS, and it installed a back door on the server that allowed unrestricted access to the infected host. (According to the authors, this back door worked only if the IIS was running Microsoft Windows 2000. On Windows NT, it caused the server to crash.) It also used a scanning technique whereby it searched the local network topology for other vulnerable hosts and then quickly spread onto those machines as well. This meant that, once the worm had penetrated the firewall, it was able to quickly replicate itself throughout the entire IIS intranet.
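Why does a preference for nearby addresses matter so much once a worm is behind the firewall? The sketch below compares the share of probes that land in the scanner's own neighborhood with and without a local bias. The weights `p_same_16` and `p_same_8` are illustrative assumptions, not figures taken from the article, and the function only produces address tuples for counting.

```python
import random

def pick_target(own_ip, rng, p_same_16=0.4, p_same_8=0.5):
    """Pick an address tuple, biased toward the scanner's own network.

    p_same_16 and p_same_8 are assumed weights for staying inside the same
    /16 and the same /8 network; the remainder is spread over all addresses.
    """
    first, second = own_ip[0], own_ip[1]
    roll = rng.random()
    if roll < p_same_16:
        return (first, second, rng.randrange(256), rng.randrange(256))
    if roll < p_same_16 + p_same_8:
        return (first, rng.randrange(256), rng.randrange(256), rng.randrange(256))
    return tuple(rng.randrange(256) for _ in range(4))

def share_in_same_16(own_ip, picks):
    """Fraction of picks that fall in the same /16 network as own_ip."""
    hits = sum(1 for ip in picks if ip[:2] == own_ip[:2])
    return hits / len(picks)

rng = random.Random(7)
own_ip = (10, 20, 30, 40)  # hypothetical internal address

localized = [pick_target(own_ip, rng) for _ in range(10_000)]
uniform = [pick_target(own_ip, rng, p_same_16=0.0, p_same_8=0.0)
           for _ in range(10_000)]

local_share = share_in_same_16(own_ip, localized)
uniform_share = share_in_same_16(own_ip, uniform)
print(local_share, uniform_share)
```

With purely random selection, almost no probes stay inside the local network; with a local bias, a large share of them do, and those are exactly the machines the firewall was supposed to be shielding.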

The authors of the study tried to chart the theoretical speed at which Code Red II infected the Internet, but they ran into two obstacles. First, because of Code Red II's localized scanning technique, its speed through an IIS topology can't be pinpointed: An infection in one server farm could suddenly burst out across the Internet as a massive bloom of infection. Second, because it used the same IIS vulnerability as Code Red I, it was in fact competing against the first worm, which made it impossible to isolate its maximum velocity as it spread.

However, more to the point, what Code Red II showed was that we had entered into a new realm of cyber terrorism. Not only had the original worm evolved, but it was now in active competition with other worms. Furthermore, the authors of these devices were learning: They were using a variety of techniques to maximize the speed of the infection, improvising much as an artist might improvise on a theme.

This improvisation--the incorporation of newer and more potent techniques--was realized even more forcefully in the infections created by a multi-vector worm that has become known as Nimda.

Nimda--Its Full Function Is Still Not Known

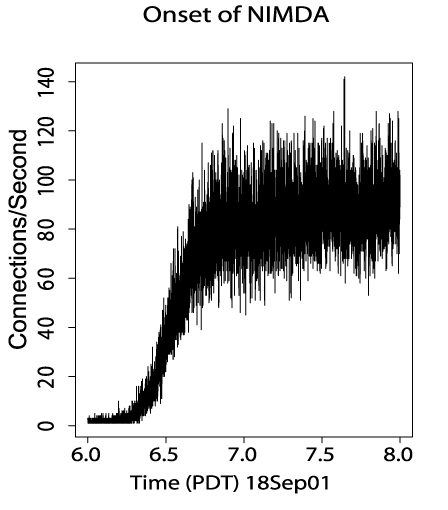

Nimda began on September 18, 2001--only a month after the introduction of Code Red II. It spread with extreme ferocity and managed to sustain itself on the Internet for months after it started. It spread extensively behind firewalls and illustrated the skill with which designer worms were being created. According to the study, Nimda is thought to have used at least five different methods to spread itself:

- By infecting Web servers from infected client machines via active probing for a Microsoft IIS vulnerability

- By bulk emailing of itself as an attachment based on email addresses determined from the infected machine

- By copying itself across open network shares

- By adding exploit code to Web pages on compromised servers in order to infect clients that browse the page

- By scanning for the back doors left behind by Code Red II and by another worm called the "sadmind" worm

There is also something that the authors call "an additional synergy," an adaptation to the real world of Internet connectivity. Many of today's firewalls allow email to pass through unscreened. Instead, these installations rely on the mail servers to remove viruses and worms from the packets that have been sent. However, some of these servers scan only for particular code signatures, which means they recognize only "known" viruses and worms and are not particularly effective during the first few minutes--or in some cases, hours--of an outbreak, when a new culprit is attacking. That gives Nimda--or another unknown device--plenty of opportunity and time to cross the firewall and scatter through the entire realm of internal networks. As an example of creativity, Nimda was custom-designed to maximize its impact by tailoring itself to the way organizations actually connect to the Internet today.
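The weakness of signature-only scanning can be sketched in a few lines. This is a deliberately simplified model, not how any particular mail server product works: the class name `SignatureScanner` and the byte strings are placeholders invented for this example, and real scanners use far more elaborate matching.

```python
class SignatureScanner:
    """Flags an attachment only if it contains an already-known byte pattern."""

    def __init__(self):
        self.signatures = []

    def add_signature(self, pattern: bytes) -> None:
        # In practice, a vendor ships this update after analyzing a sample.
        self.signatures.append(pattern)

    def is_flagged(self, attachment: bytes) -> bool:
        return any(pattern in attachment for pattern in self.signatures)

scanner = SignatureScanner()
scanner.add_signature(b"KNOWN-WORM-BYTES")  # placeholder for an old signature

known_attachment = b"header KNOWN-WORM-BYTES trailer"
new_attachment = b"header NEW-WORM-BYTES trailer"  # nothing on file for this yet

caught_known = scanner.is_flagged(known_attachment)
missed_new = not scanner.is_flagged(new_attachment)

# Only after the signature update arrives is the new attachment stopped.
scanner.add_signature(b"NEW-WORM-BYTES")
caught_after_update = scanner.is_flagged(new_attachment)

print(caught_known, missed_new, caught_after_update)
```

The gap between the first sighting of the new attachment and the call that adds its signature is the window the paragraph above describes.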

Still, more importantly, the authors of this study readily admit that no one but the authors of Nimda knows what Nimda's full functionality is designed to do. It's still out there, and nobody knows what it's going to do. All that is known is that Nimda is continuing to spread, and it's spreading quickly. Who knows the havoc that is ticking away in this device as it awaits the right trigger or time to ultimately activate?

A Cyber Center for Disease Control

The rates of infection by the Code Red worms and the Nimda worm/virus are just beginning to be studied, and these are just four of the hundreds of nefarious devices that are currently being constructed by shadowy Internet hackers. Consequently, if you feel that your firewall or antivirus software is protecting your installation, you'd better wise up and pay attention: The authors of the study believe that the techniques used by these worms are just the beginning of an epidemic that is bound to become more virulent and difficult to handle. Most importantly, these worms are still primitive and still evolving at a tremendous rate. Today, a well-crafted worm could, according to the authors, take advantage of every topology and every security loophole to reduce the time that it takes to infect the entire Internet from days down to seconds. In their conclusion, where they call for the establishment of a Cyber "Center for Disease Control," the authors state that "better-engineered worms could spread in minutes or even tens of seconds rather than hours, and could be controlled, modified, and maintained indefinitely, posing an ongoing threat of use in attack on a variety of sites and infrastructures. Thus, worms represent an extremely serious threat to the safety of the Internet." And while iSeries and AS/400 sites have, in the past, been relatively isolated and immune to such infections, that margin of safety is quickly being eroded as more systems become vulnerable through the use of attached server devices and the proliferation of email.

If, as the authors believe, we are at the edge of infection, what are we to do?

First of all, the obvious chore of locking down IIS and maintaining constant vigilance to viral alerts is essential.

Second, enforcing security at our sites--through every known means of protection--must become a primary requirement of both IT and management.

Finally, educating our users about the dangers of rapid infection is paramount. Alerts, notices, memoranda, and announcements about new infections must be quickly distributed--and not by email or electronic means that might propel the epidemic. As absurd and difficult as it may sound, a series of telephone calls or other means of alert may be the only recourse IT has to notify everyone of the infection. The word must be broadcast whenever there is suspicion that a machine or network has become infected. Of course, shutting down the network will always get people's attention too, but it is arguably better for IT to shut it down than to have a worm do it for you.

It's clear that the topic of security these days can no longer conjure up feelings of well-being or peace of mind: It has become a nightmare vigilance from which all of us desire a key by which to escape. How we will obtain that key is still difficult to ascertain.

Thomas M. Stockwell is the Editor in Chief of MC Press, LLC. He has written extensively about program development, project management, IT management, and IT consulting and has been a frequent contributor to many midrange periodicals. He has authored numerous white papers for iSeries solutions providers. His most recent consulting assignments have been as a Senior Industry Analyst working with IBM on the iSeries, on the mid-market, and specifically on WebSphere brand positioning. He welcomes your comments about this or other articles and can be reached at
